| At a glance | |
|---|---|
| Product | Iomega StorCenter ix4-300d Network Storage 4-bay (8 TB) (70B89001NA) |
| Summary | Four-bay Marvell Armada-based NAS with cloud backup and sharing features |
| Pros | • Supports backup to cloud, rsync and SMB targets • Higher throughput than previous Marvell-based models • USB 3.0 • Three-year warranty |
| Cons | • No IPv6 support • Single volume with no RAID 1, expansion or migration options • Sparse collection of add-ins • Cloud feature relies on router port forwarding/UPnP |

Typical Price: $2772

Introduction

The last Iomega NAS we looked at was the 1.8 GHz Atom D525-based two-bay px2-300d, back in November. This time, we are looking at a refresh in Iomega’s lower-priced ix product line, the four-bay ix4-300d. The 300d comes in 4, 8 and 12 TB configurations. There is also a BYOD version, but it’s not sold in the U.S. We’ll be reviewing the 8 TB version (model 35566).

The ix4-300d, which replaces the ix4-200d, is based on a 1.3 GHz Marvell Armada XP CPU. The NAS comes with four Seagate 2 TB hard drives. It performs well compared to other Marvell NASes, but you’ll be disappointed if you compare it to its Intel-based siblings.

The figure below is an angle view of the ix4 with its metal cover removed. The entire ix4-300d chassis is metal, except for the black plastic front panel bezel. The lone USB 3.0 port is on the front, but at least it’s not behind the drive door as on other NASes we’ve reviewed, such as the Thecus N5550.

It also shows the ix4-300d’s side-loading configuration. Although you could remove the metal cover, which is secured by two thumbscrews, with the NAS powered up, Iomega says that the ix4-300d’s drives are not hot-swappable.

StorCenter ix4-300d Front Panel and Drive Configuration

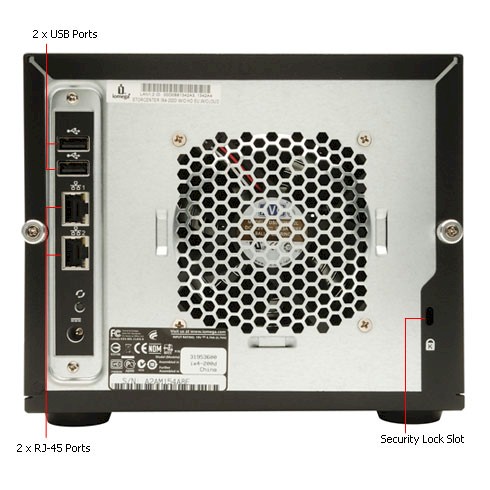

The next figure shows the rear panel, where you can see two USB 2.0 ports, the power connector, dual Gigabit Ethernet ports with support for failover and 802.3ad aggregation, and a nice, quiet fan. Unfortunately, there is no eSATA port to attach external drives for speedier storage expansion or attached backup.

StorCenter ix4-300d Back Panel

Inside

The figure below shows the ix4’s main board, which is based on Marvell’s Armada XP clocked at 1.3 GHz, supported by 512 MB of soldered-on DDR3 RAM and 512 MB of Samsung flash. Dual Marvell 88E1318 Alaskas provide the dual Gigabit Ethernet ports and a NEC D720200F1 provides the USB 3.0 port. The SATA II interface for the four drives is handled by a Marvell 88SX7042 controller.

The 8 TB version that Iomega shipped for review came with four Seagate Barracuda 7200.14 2 TB (ST2000DM001) drives formatted with the EXT4 file system, which is a change from the XFS file system previously used by Iomega.

ix4-300d board

Table 1 has a summary of the key components for the ix4-300d compared with its -200d predecessor and px2-300d Atom-based sibling.

| | ix4-300d | ix4-200d | px2-300d |
|---|---|---|---|
| CPU | Marvell Armada XP @ 1.3 GHz | Marvell 88F6281 Kirkwood @ 1.2 GHz | Intel Atom D525 @ 1.8 GHz |
| Ethernet | Marvell 88E1318 Alaska (x2) | Marvell 88E81116R (x2) | Realtek RTL8111f (x2) |
| RAM | 512 MB DDR3 | 512 MB DDR3 | 2 GB DDR3 SoDIMM |
| Flash | 512 MB | 64 MB | 1 MB |
| SATA | Marvell 88SX7042 | Marvell 88E6121 | Intel 82801IB |
| USB 3.0 | NEC D720200F1 | N/A | NEC D720200F1 |

Table 1: ix4-300d component comparison

Power consumption measured 44 W with the four drives spun up and 17 W with them spun down. Noise could be classified as medium, with both the fan and the drives audible in a quiet home office.
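To put the two power readings in perspective, here is a rough sketch that turns them into a yearly energy estimate. The 44 W and 17 W figures come from the measurements above; the duty cycles (hours per day with the drives spun up) are purely hypothetical examples, and the function name is our own.

```python
# Power figures measured in the review, in watts.
ACTIVE_W = 44.0  # four drives spun up
IDLE_W = 17.0    # drives spun down


def annual_kwh(active_hours_per_day: float) -> float:
    """Estimate yearly energy use for a given number of spun-up hours per day."""
    if not 0 <= active_hours_per_day <= 24:
        raise ValueError("active_hours_per_day must be between 0 and 24")
    idle_hours = 24 - active_hours_per_day
    daily_wh = ACTIVE_W * active_hours_per_day + IDLE_W * idle_hours
    return daily_wh * 365 / 1000  # Wh per day -> kWh per year


if __name__ == "__main__":
    # Hypothetical duty cycles: always spun up, and 8 hours of activity per day.
    for hours in (24, 8):
        print(f"{hours:>2} h/day spun up: {annual_kwh(hours):.1f} kWh/year")
```

Multiply the result by your local electricity rate to get a running-cost estimate; the rate is deliberately left out because it varies widely.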

Features

The bulleted summary below provides a bit more detail on the feature set.

Storage

- Network file sharing via SMB/CIFS, NFS, AFP

- WebDAV support

- Windows DFS support

- HTTP / HTTPS file and admin access

- FTP, SFTP and TFTP servers

- JBOD, RAID 0, 5, 10 volumes *See note below

- iSCSI targets with ISNS support

- EXT4 filesystem

Backup

- Network Backup: Schedulable (smallest interval is one day) to / from rsync targets and SMB/CIFS shares

- Apple Time Machine backup

- Auto file copy from PTP-enabled digital cameras

Media

- Recording and viewing of up to 16 IP cameras [supported models] via MindTree SecureMind (1 camera license included)

- AXIS Video Hosting System support

- UPnP AV / DLNA media server (Twonky Media)

- Photo slideshow

- iTunes server

- BitTorrent downloader

- Auto upload to Flickr

- Auto upload to Facebook

Cloud

- Secure Web-based remote access and site-to-site backup (Personal Cloud)

- Amazon S3, Mozy, EMC Avamar, EMC Atmos cloud backup

Other

- Dual Gigabit Ethernet interfaces with VLAN and failover modes

- SNMP support

- Joins NT Domain / Active Directories for account information

- Compatible with Windows Server 2003, Windows Server 2008 R2, VMware vSphere ESX 5 (iSCSI and NAS), XenServer™ 5.5, 5.6 (w/ MPIO), iSCSI & NFS

- User level quotas

- Email alerts

- Logging

- File upload via Bluetooth

- USB printer serving

- UPS shutdown synchronization via USB

Iomega hasn’t tweaked the OS since our last look at the px2-300d. The apps haven’t changed, but here they all are in the All Features screenshot below.

All Features

Volume configuration is fairly unsophisticated compared to other RAID 5 NASes. First, there is no RAID level migration or expansion. If you want to do either, you need to delete the existing volume. Once you choose a RAID 5 or 10 volume, you’ll need to wait a long time while it syncs. Full RAID 10 sync of the 8 TB review sample took 10 hours and 45 minutes!

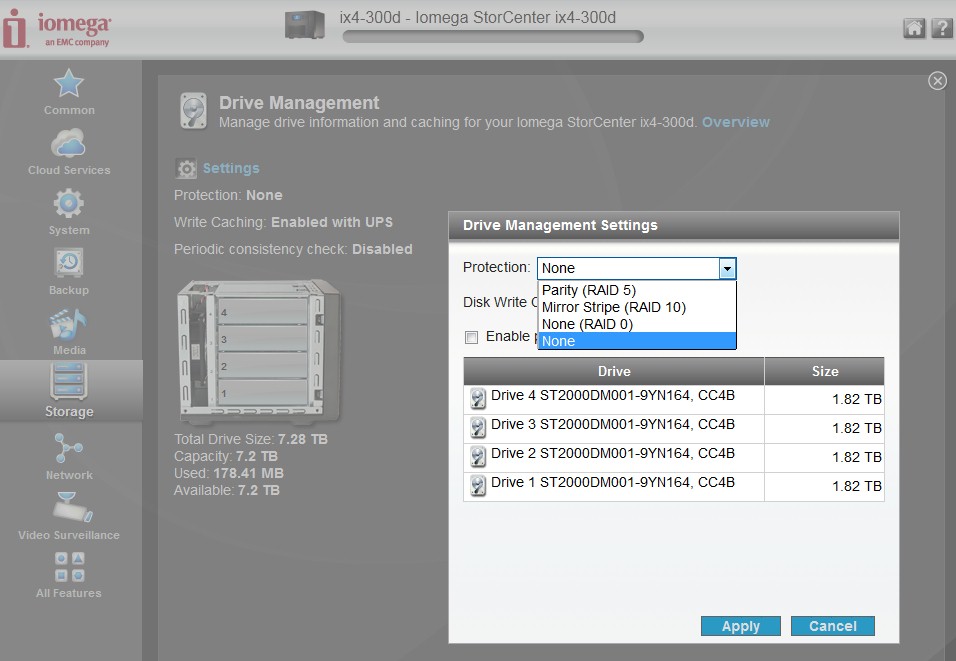

The Drive Management screenshot below shows the volume creation options. Note the absence of drive-select checkboxes and of a RAID 1 option. So you can’t create multiple RAID 1 or 0 volumes, nor can you create a three-drive RAID 5 volume with a spare drive. The None option actually creates a single JBOD volume with all four drives. The default for write caching is Enabled with UPS as shown, but you can also select Always enabled and Always disabled.

Drive Management

I found you can actually create a RAID 1 volume by pulling two drives. But you can’t create two RAID 1 volumes or a mix of RAID 0 and 1. No matter how many drives you put in, you can create only one volume.
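Since the ix4 offers only one volume, the choice of mode fixes how much of the raw 8 TB you can use. The sketch below applies the textbook capacity rules for each mode to four 2 TB drives. It is a nominal calculation only: it ignores file system and formatting overhead, and the function name and mode labels are our own, not anything from Iomega’s firmware.

```python
def usable_tb(level: str, drives: int, drive_tb: float) -> float:
    """Nominal usable capacity in TB, ignoring file system overhead."""
    if drives < 1 or drive_tb <= 0:
        raise ValueError("need at least one drive with positive capacity")
    if level in ("jbod", "raid0"):
        return drives * drive_tb  # no redundancy, all space usable
    if level == "raid1":
        if drives != 2:
            raise ValueError("this sketch models RAID 1 as a two-drive mirror")
        return drive_tb  # one drive's worth; the other is a copy
    if level == "raid5":
        if drives < 3:
            raise ValueError("RAID 5 needs at least three drives")
        return (drives - 1) * drive_tb  # one drive's worth goes to parity
    if level == "raid10":
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, four or more")
        return drives / 2 * drive_tb  # half the drives hold mirror copies
    raise ValueError(f"unknown level: {level}")


if __name__ == "__main__":
    # The four modes offered with all four 2 TB drives installed.
    for level in ("jbod", "raid0", "raid5", "raid10"):
        print(f"{level:>6}: {usable_tb(level, 4, 2.0):.0f} TB")
    # The two-drive RAID 1 workaround described above.
    print(f"{'raid1':>6}: {usable_tb('raid1', 2, 2.0):.0f} TB")
```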

A few other competitive weaknesses remain including:

- You can limit access to shares by user and/or group, but you can’t control access by service. So you can’t shut off FTP access to certain shares, for example.

- No iSCSI encryption or authentication and no target multiple connects

- No IPv6 support

- Unlike px-series NASes, the ix4 doesn’t support volume or folder encryption.

If you want more screenshots of the ix4’s features, check the ix2-dl review gallery.

Performance

The StorCenter ix4-300d was tested with 3.3.4.29856 firmware using our standard NAS test process.

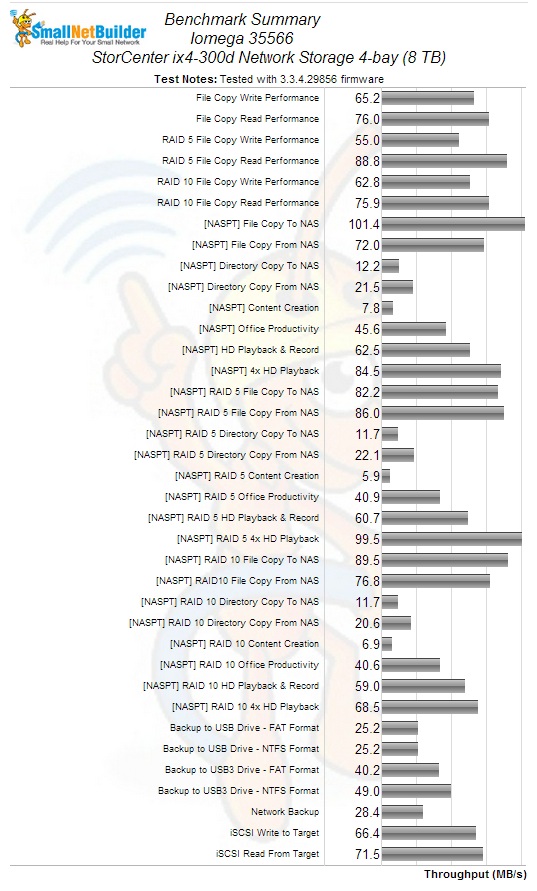

The Benchmark summary below shows a good deal of variation among the Windows File Copy write tests. The best throughput, 76 MB/s, was with RAID 10; the worst, 55 MB/s, was with RAID 5; and RAID 0 landed in the middle at 65 MB/s. Windows File Copy reads also showed a good deal of separation: RAID 5 was best at 89 MB/s, while RAID 0 and 10 tied at 76 MB/s.

Iomega StorCenter ix4-300d Benchmark Summary

Intel NASPT File Copy writes were much higher than Windows File Copy writes: 101 MB/s vs. 65 MB/s for RAID 0, 82 MB/s vs. 55 MB/s for RAID 5 and 90 MB/s vs. 63 MB/s for RAID 10. NASPT reads tracked more closely with Windows File Copy results for RAID 0 (72 vs. 76 MB/s), RAID 5 (86 vs. 89 MB/s) and RAID 10 (77 vs. 76 MB/s).
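To make the size of those gaps easier to see, the short sketch below expresses each NASPT result as a percentage difference from the matching Windows File Copy result. It uses only the MB/s pairs quoted in the paragraph above; the dictionary and function names are our own.

```python
# (Windows File Copy, NASPT File Copy) throughput in MB/s, as quoted above.
WRITE = {"RAID 0": (65, 101), "RAID 5": (55, 82), "RAID 10": (63, 90)}
READ = {"RAID 0": (76, 72), "RAID 5": (89, 86), "RAID 10": (76, 77)}


def naspt_delta_pct(windows_mbps: float, naspt_mbps: float) -> float:
    """Percent by which the NASPT result differs from the Windows result."""
    return round((naspt_mbps / windows_mbps - 1) * 100, 1)


if __name__ == "__main__":
    for label, table in (("write", WRITE), ("read", READ)):
        for raid, (windows, naspt) in table.items():
            print(f"{raid:>7} {label:<5}: NASPT {naspt_delta_pct(windows, naspt):+.1f}%")
```

Positive values mean NASPT reported higher throughput than the Windows file copy test; negative values mean it reported lower.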

iSCSI performance of 66 MB/s for write and 72 MB/s for read placed the ix4-300d in mid-chart for all four-drive NASes compared.

Attached backup tests had to be hand-timed due to log time-stamps with only one-minute resolution. Since there is no built-in formatter, we tested only FAT and NTFS formats, with best case of 49 MB/s obtained with the test drive connected via USB 3.0 and NTFS formatted.

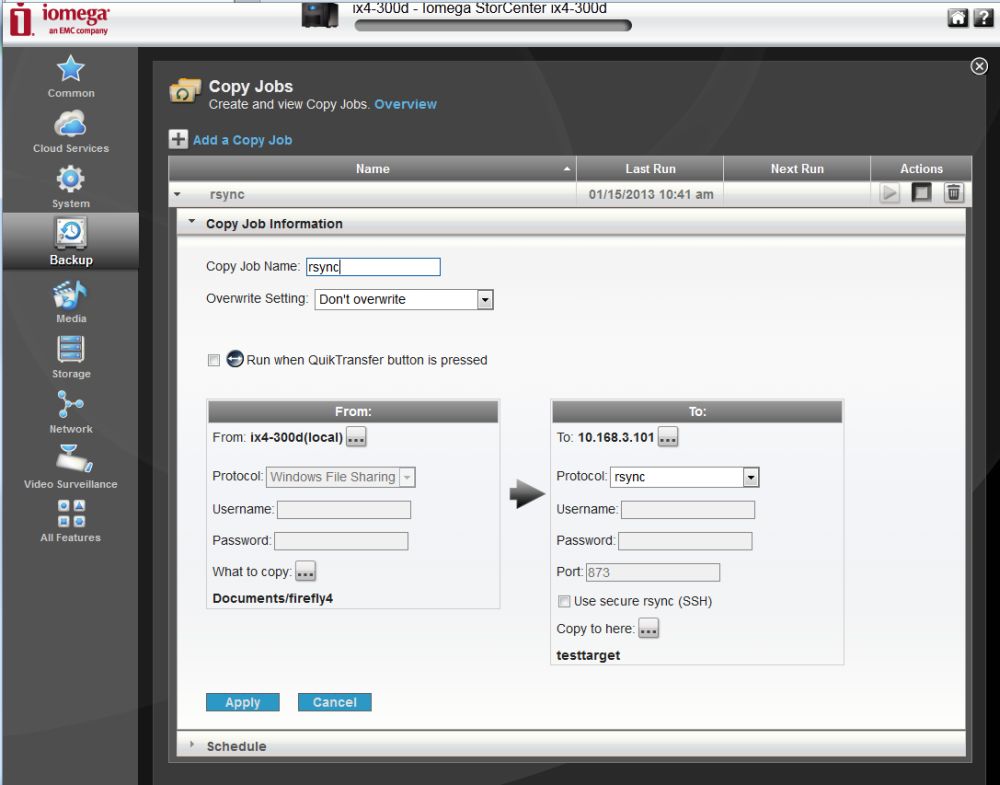

Rsync backup to the NAS Testbed running DeltaCopy acting as an rsync target came in at a ho-hum 28 MB/s. We previously were not able to test rsync backup. But this time Iomega clued us in that it works only if the target rsync server has a username of rsync with no password. You don’t actually enter this on the ix4, because you can enter login credentials only if you enable SSH rsync. The screenshot below may help to explain this a little better. You also need to enter the IP address of the rsync server in the To: box. The ix4’s network browser won’t find it.

Iomega ix4-300d rsync configuration

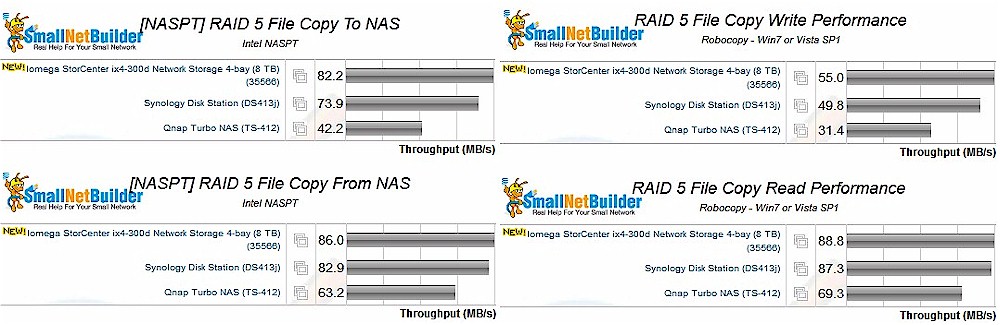
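Before pointing the ix4 at an rsync target, it can help to check from a PC that the target answers as user rsync. The sketch below is one way to do that, assuming a standard rsync client is installed on the PC: it builds the usual `rsync rsync://USER@HOST/` module-listing command. The function names and the 192.168.1.50 address are hypothetical, and this is not Iomega’s own procedure.

```python
import ipaddress
import subprocess

RSYNC_USER = "rsync"  # account name the target must use, per the review (no password)


def build_probe_command(server_ip: str) -> list[str]:
    """Build an rsync command that lists the modules exported by an rsync daemon."""
    # The ix4 needs a literal IP address and has no IPv6 support,
    # so reject hostnames and IPv6 literals up front (raises ValueError).
    ipaddress.IPv4Address(server_ip)
    return ["rsync", f"rsync://{RSYNC_USER}@{server_ip}/"]


def probe(server_ip: str, timeout_s: float = 10.0) -> bool:
    """Return True if the daemon answers the module-listing request."""
    result = subprocess.run(
        build_probe_command(server_ip),
        capture_output=True,
        text=True,
        timeout=timeout_s,
    )
    return result.returncode == 0


if __name__ == "__main__":
    # Hypothetical address of the rsync target on the LAN.
    print(" ".join(build_probe_command("192.168.1.50")))
```

If the listing works from a PC but the ix4 job still fails, the username and password rule described above is the first thing to recheck.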

Because of the change in test process, we can’t directly compare the -300d to its ix4-200d predecessor. So we compared it to other Marvell-based 4-bay NASes that were tested with the current benchmark methods, namely the Synology DS413j and QNAP TS-412.

The plot composite below shows Windows and NASPT RAID 5 file copy write and read results for the group. This is the first time since we’ve been doing these composite comparisons that the rankings came out the same in all four benchmarks. So Iomega’s choice of the Armada SoC looks like a good move, since the ix4-300d takes top place in all four benchmarks.

RAID 5 File Copy Performance Comparison

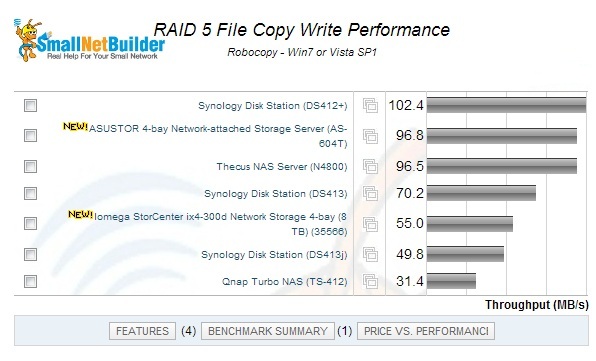

For fun, now let’s compare the ix4-300d against some Atom-powered products. Remember that some Intel-based NASes without any drives cost about as much as the Iomega with four 2 TB drives.

Iomega StorCenter ix4-300d RAID 5 File Copy Write Comparison against Intel models

For RAID 5 Windows File Copy Write…ouch! The ix4-300d has about half the throughput of the Synology DS412+. But when loaded with four Seagate Barracuda 7200.14 2 TB drives, the Synology is about 40% more expensive than the Iomega.

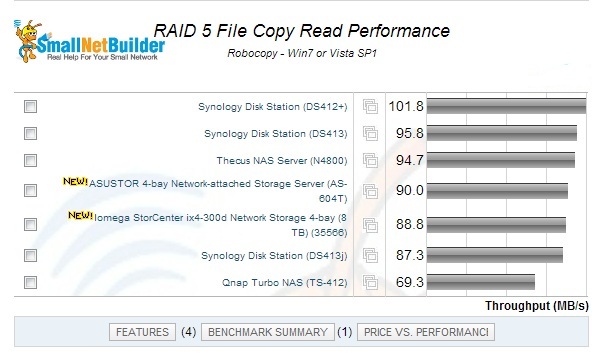

For RAID 5 Windows File Copy Read, however, the 300d fares much better, lagging behind the new ASUSTOR AS-604T by only a few MB/s. Not too shabby. But there is still a 20 MB/s gap between the 300d and the top-ranking Synology DS412+.

Iomega StorCenter ix4-300d RAID 5 File Copy Read Comparison against Intel models

Use the NAS Charts to further explore performance.

Closing Thoughts

The ix4-300d is a higher-performing replacement for the ix4-200d, and its current street price is about half that of its 8 TB dual-core Intel Atom-based px4-300d sibling ($780 vs. $1450). You don’t have to pay such a high premium to step up to an Atom-based NAS, however. You could do better for performance, features and price with the top-ranking Atom-based Synology DS412+, currently street-priced around $625, plus $384 for four equivalent Seagate 2 TB drives.

But if your budget doesn’t allow for the Intel premium and you’re just fine with a single-volume NAS, Iomega has finally brought its performance level up to where you can focus your decision on features.
