"Gigabit" internet connections, which have been common in Asia for years, are now becoming common in Europe and the U.S. But the proliferation of these fast paths to the internet has brought challenges. Readers report that routers our benchmarks show to support wire-speed gigabit throughput deliver speeds nowhere near that in real life. So I set out to find out what’s up.

The Secret

Most of today’s routers default to using cut-through forwarding (CTF), also known as NAT acceleration or cut-through switching. This technique drastically reduces latency (and increases throughput) by starting to forward a frame as soon as its destination address is received, instead of waiting for the whole frame.

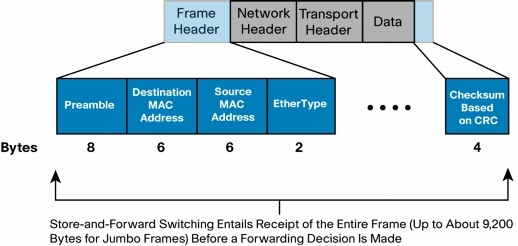

The diagram below from a Cisco whitepaper shows the destination address is part of the first-received frame header. Since the frame can be forwarded after as few as 6 bytes are received vs. 1518 bytes for a full Ethernet frame (or up to 9200 bytes for a jumbo frame), the wait before forwarding starts can theoretically be cut by a factor of about 250 (1518 / 6), and by even more for jumbo frames.

Ethernet frame detail

(courtesy Cisco whitepaper "Cut-Through and Store-and-Forward Ethernet Switching for Low-Latency Environments")

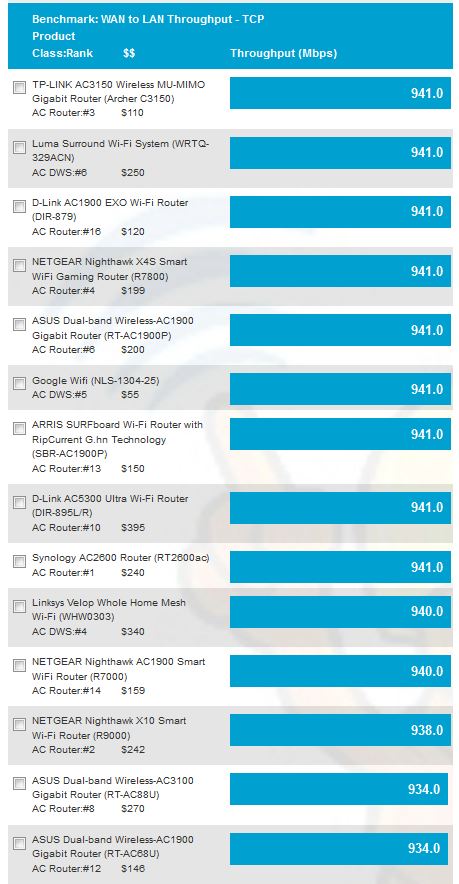
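To put rough numbers on the difference, here is a minimal sketch that counts only the time needed to receive the bytes a switch must see before it can start forwarding. It assumes a 1 Gbps link and ignores address lookup, queuing and any other processing, so treat the output as an upper bound on the benefit rather than a measurement. The result depends heavily on how many bytes are assumed to arrive before forwarding begins; 6 bytes (the destination address alone) is used here, as in the text.

```python
# Sketch: time spent receiving bytes before forwarding can begin.
# Assumption: 1 Gbps line rate; lookup and queuing delays are ignored.
LINK_BPS = 1_000_000_000

CUT_THROUGH_BYTES = 6      # destination MAC address only
FULL_FRAME_BYTES = 1518    # maximum standard Ethernet frame
JUMBO_FRAME_BYTES = 9200   # jumbo frame size quoted in the article


def wait_us(bytes_needed, link_bps=LINK_BPS):
    """Microseconds needed to receive `bytes_needed` bytes at `link_bps`."""
    return bytes_needed * 8 / link_bps * 1e6


for label, size in [
    ("cut-through", CUT_THROUGH_BYTES),
    ("store-and-forward, full frame", FULL_FRAME_BYTES),
    ("store-and-forward, jumbo frame", JUMBO_FRAME_BYTES),
]:
    ratio = wait_us(size) / wait_us(CUT_THROUGH_BYTES)
    print(f"{label}: {wait_us(size):.3f} us ({ratio:.0f}x cut-through)")
```

Changing `CUT_THROUGH_BYTES` to include more of the header shows how quickly the theoretical advantage shrinks.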

The effect of CTF is clearly shown in our wired router performance benchmarks, where 941 Mbps has become the test result for most routers tested. This result, which is just shy of the maximum for a Gigabit Ethernet connection including TCP/IP overhead, has become such a common result as to make our unidirectional benchmarks unhelpful in router selection.

WAN to LAN routing throughput – Version 9 process
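Where does 941 Mbps come from? The sketch below works out the maximum TCP payload rate on a Gigabit Ethernet link from per-frame overhead. It assumes a 1500-byte MTU, IPv4 without options, and the TCP timestamps option enabled (a common operating system default, but not something confirmed for this testbed).

```python
# Sketch: theoretical TCP payload throughput over Gigabit Ethernet.
# Assumptions: 1500-byte MTU, IPv4 without options, one TCP segment per frame.
LINE_RATE_MBPS = 1000
MTU = 1500
ETH_OVERHEAD = 8 + 14 + 4 + 12   # preamble/SFD + header + FCS + inter-frame gap
IP_HEADER = 20
TCP_HEADER = 20
TCP_TIMESTAMP_OPTION = 12        # timestamps option, padded to 12 bytes


def tcp_goodput_mbps(tcp_option_bytes=0):
    """Payload bits delivered per second, given per-frame overhead."""
    payload = MTU - IP_HEADER - TCP_HEADER - tcp_option_bytes
    wire_bytes = MTU + ETH_OVERHEAD
    return LINE_RATE_MBPS * payload / wire_bytes


print(f"without timestamps: {tcp_goodput_mbps():.1f} Mbps")
print(f"with timestamps:    {tcp_goodput_mbps(TCP_TIMESTAMP_OPTION):.1f} Mbps")
```

Under these assumptions the timestamps case lands at about 941 Mbps, which matches the figure that keeps showing up in the benchmarks.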

What’s worse is many people with gigabit internet connections are reporting throughput much less than half these results. Clearly, CTF is being somehow disabled, but under what conditions?

The Tests

I set up a simple testbed using two computers running iperf3, the latest version of the popular network bandwidth benchmark tool. One of the improvements built into iperf3 is that the defaults have been changed to automatically provide an accurate view of throughput without having to mess with TCP/IP window, buffer size or MSS settings. A reverse mode has also been added to make it easier to run uplink and downlink tests without having to swap the iperf client and server around.

Among the results for a "cut through forwarding" search on Google are references to port forwarding not being compatible with CTF on some products. So the test configuration placed the iperf3 server on the router LAN side and the client on the WAN side. This configuration requires forwarding TCP port 5201 through the router under test’s firewall. So all tests were run through a forwarded port.

I tested five routers: three with different processors and two that share the same one. iperf3 was run using TCP/IP for 10 seconds with all the defaults, which use a 128 KB read/write buffer length and an MSS of 1460 bytes. iperf3 doesn’t report the window size used and I could not find it in the documentation. But since the defaults produced maximum TCP/IP throughput, I didn’t mess with them.
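For anyone who wants to repeat this kind of run, here is a minimal sketch of how it could be scripted. It is not the script used for these tests. It assumes `iperf3 -s` is already running on the LAN-side machine, that TCP port 5201 is forwarded to it, and that the client runs on the WAN side. The function names and the address in the usage comment are placeholders. In that layout the default direction (client sends) would be downlink and reverse mode (`-R`) would be uplink.

```python
import json
import subprocess


def iperf3_command(server, reverse=False, seconds=10, port=5201):
    """Build an iperf3 client command line that emits a JSON report (-J)."""
    cmd = ["iperf3", "-c", server, "-p", str(port), "-t", str(seconds), "-J"]
    if reverse:
        cmd.append("-R")  # reverse mode: the server sends, the client receives
    return cmd


def received_mbps(report_json):
    """Extract receiver-side TCP throughput in Mbps from an iperf3 JSON report."""
    report = json.loads(report_json)
    return report["end"]["sum_received"]["bits_per_second"] / 1e6


def run_test(server, reverse=False):
    """Run one 10-second TCP test and return throughput in Mbps."""
    result = subprocess.run(
        iperf3_command(server, reverse),
        capture_output=True, text=True, check=True,
    )
    return received_mbps(result.stdout)


# Usage (placeholder address standing in for the router's WAN IP):
#   downlink = run_test("192.0.2.10")
#   uplink = run_test("192.0.2.10", reverse=True)
```

Parsing the JSON report instead of the console text keeps the script from breaking if the human-readable output format changes.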

| | ASUS RT-AC88U | Linksys WRT1900ACS | NETGEAR R7800 | NETGEAR R8500 | Ubiquiti EdgeRouter Lite |
|---|---|---|---|---|---|
| Processor | Broadcom BCM4709C0KFEBG dual-core @ 1.4 GHz | Marvell Armada 38X dual-core @ 1.6 GHz | Qualcomm dual-core IPQ8065 Internet Processor @ 1.7 GHz | Broadcom BCM4709C0KFEBG dual-core @ 1.4 GHz | Cavium CN5020 dual core @ 500 MHz |
| F/W Rev | 3.0.0.4.380_7266 | 1.0.3.177401 | 1.0.2.28 | 1.0.2.94 | 1.9.1 |
| Downlink (Mbps) | | | | | |
| Default | 941 | 940 | 940 | 941 | 940 |
| QoS | 902 | 936 | 937 | 940 | 119 |
| Traffic Meter | 935 | – | 940 | 664 | – |
| Keyword Block | – | – | 941 | 549 | – |
| Parental Control | 939 | 940 | – | – | – |
| Uplink (Mbps) | | | | | |
| Default | 940 | 822 | 941 | 940 | 938 |
| QoS | 331 | 791 | 936 | 915 | 138 |
| Traffic Meter | 415 | – | 941 | 566 | – |
| Keyword Block | – | – | 941 | 566 | – |
| Parental Control | 406 | 785 | – | – | – |

Table 1: Test summary

Since routers vary in supported features, I couldn’t run all tests on all routers; a "–" in the table indicates a test I could not run. For QoS tests, I manually set both uplink and downlink bandwidth to 1,000 Mbps and used automatic, adaptive or "smart" modes. If the QoS GUI allowed, I set the test client as the prioritized client.

For "parental control" testing, I just enabled the Linksys’ Parental Control feature. For the ASUS, I enabled Parental Control and checked all four top category boxes, i.e. Adult, IM, P2P and Streaming.

You’ll be happy to know that port forwarding did not reduce either uplink or downlink throughput. The one possible exception is the Linksys WRT1900ACS, whose default uplink throughput of 822 Mbps is roughly 120 Mbps lower than that of the other routers. But I didn’t check whether that was due to port forwarding.

The results also show uplink throughput was reduced more often than downlink.

Fans of Ubiquiti’s EdgeRouter Lite may be disappointed to see the huge throughput reduction with Smart Queue QoS enabled in both directions. However, this result appears to be confirmed by many posts in the Ubiquiti Community forum.

The only product that showed no meaningful throughput reduction in any test case was the NETGEAR R7800, powered by a Qualcomm processor.
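The observations above can be checked directly against Table 1. The sketch below computes each feature's throughput drop relative to the same router's Default result in the same direction, then lists the drops above a cutoff. The 5% cutoff is an arbitrary assumption for illustration and is not part of the test method. `None` marks tests that could not be run.

```python
# Sketch: flag feature/router combinations in Table 1 that cut throughput.
# Assumption: a drop of more than 5% versus Default counts as "significant".
ROUTERS = ["ASUS RT-AC88U", "Linksys WRT1900ACS", "NETGEAR R7800",
           "NETGEAR R8500", "Ubiquiti EdgeRouter Lite"]

RESULTS = {  # Mbps, copied from Table 1
    "Downlink": {
        "Default": [941, 940, 940, 941, 940],
        "QoS": [902, 936, 937, 940, 119],
        "Traffic Meter": [935, None, 940, 664, None],
        "Keyword Block": [None, None, 941, 549, None],
        "Parental Control": [939, 940, None, None, None],
    },
    "Uplink": {
        "Default": [940, 822, 941, 940, 938],
        "QoS": [331, 791, 936, 915, 138],
        "Traffic Meter": [415, None, 941, 566, None],
        "Keyword Block": [None, None, 941, 566, None],
        "Parental Control": [406, 785, None, None, None],
    },
}

THRESHOLD_PCT = 5.0


def significant_drops(results=RESULTS, threshold_pct=THRESHOLD_PCT):
    """Return (direction, router, feature, drop %) for drops above the cutoff."""
    drops = []
    for direction, rows in results.items():
        baseline = rows["Default"]
        for feature, values in rows.items():
            if feature == "Default":
                continue
            for router, base, value in zip(ROUTERS, baseline, values):
                if value is None:  # test not run on this router
                    continue
                drop = 100 * (base - value) / base
                if drop > threshold_pct:
                    drops.append((direction, router, feature, round(drop, 1)))
    return drops


for direction, router, feature, drop in significant_drops():
    print(f"{direction:8} {router:26} {feature:17} -{drop}%")
```

With this cutoff, the uplink direction accounts for twice as many flagged cases as downlink, and the NETGEAR R7800 does not appear in the list.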

Closing Thoughts

In addition to the modes tested, internet connection types that add tunneling, encapsulation or encryption can also significantly reduce throughput. These would include PPPoE, L2TP and PPTP. Unfortunately, I can’t test with any of those connection types.

And, of course, if you’re trying to get a full gigabit through any VPN tunnel, you can forget using any consumer Wi-Fi router. Higher encryption levels mean lower throughput. So even with quad-core ARM processors and lower encryption levels, you’ll be hard pressed to find anything that can provide higher than 50 Mbps through a VPN tunnel. For anything higher than that, you’ll need to build your own router with a PC grade multi-core CPU.

However, this little exercise has shown I need to expand SmallNetBuilder’s router testing to move beyond default configurations and do some poking to see if commonly used features knock down throughput. Look for testing like this to be included in the new router test process that is coming soon.