Introduction

| At a Glance | |
|---|---|
| Product | Mvix MvixBOX (WDN2000) |
| Summary | Dual-drive BYOD NAS with web-portal features |
| Pros | • Attractive UI • Extensive sharing protocols supported • Community Portal features (Blogs, Messaging) |
| Cons | • Buggy • Low performance |

I’ve written over 60 Network Attached Storage (NAS) articles over the last few years, and after a while, the software on the boxes all starts to look pretty similar. A typical unit runs Linux internally and has a web-based administration menu for setting up the network, adding shares, adding users, managing drives, etc. But every now and then you come across a product where the manufacturer is taking a bit of a different approach in defining what a NAS should be. In this review, I’m going to check out such a NAS: the MvixBOX from Mvix.

Hardware-wise, the MvixBOX is a two-SATA bay, RAID-capable, Bring Your Own Disk (BYOD) NAS with Gigabit Ethernet support (but without jumbo frames) and two external USB ports for additional storage.

As you can see above, the device is a bit chunky looking, with a row of blue LEDs on the front panel that are bright enough to make you think that you left the lights on in your computer room at night. One of the USB ports is located on the front, paired with a one-touch button for copying data from the external drive to internal storage. On the back (Figure 1) you can see there’s another USB port, the Gigabit Ethernet connector, and trays for disk installation.

Figure 1: MvixBOX Back Panel

You can’t really tell it from the pictures, but the main unit sits in a cradle that raises it up to allow airflow to a fan vent on the bottom. When running, I judged the fan to be a bit on the noisy side. As for power draw, with two disks installed I measured it to be about 18 Watts when active and 15 Watts after sitting idle for a while (although there is no disk spin-down configuration option). Along with Ethernet, Mvix advertises support for USB wireless dongles, but they don’t seem to document which models are supported (more on this later).
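To put the power measurements in perspective, here is a rough sketch of the yearly energy use they imply. It assumes the Box runs around the clock at a constant draw, which is a simplification, and the function name is only illustrative.

```python
HOURS_PER_YEAR = 24 * 365

def annual_kwh(watts):
    """Energy used in one year at a constant draw, in kilowatt-hours."""
    return watts * HOURS_PER_YEAR / 1000

ACTIVE_WATTS = 18  # measured with two disks installed, active
IDLE_WATTS = 15    # measured after the Box had been sitting for a while

active_kwh = annual_kwh(ACTIVE_WATTS)
idle_kwh = annual_kwh(IDLE_WATTS)
print(f"Always active: {active_kwh:.1f} kWh per year")
print(f"Always idle:   {idle_kwh:.1f} kWh per year")
```

Real usage would fall somewhere between the two figures, and multiplying by your local electricity rate gives the running cost.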

Setup

To get the Box going, you’ll need to supply your own disks. Figure 2 shows the Box with one disk tray slid halfway out.

Figure 2: Disk Installation

In general, the unit felt solid and the use of 3.5" SATA disks made installation straightforward and easy.

Mvix documents support for Windows, Mackintosh (sic) and Linux users. But that’s after the NAS has been set up for the first time, because the installation software is Windows-only. This is one area where Mvix has taken a different path than other manufacturers. Most NASes allow you to boot up and then set everything up via a web interface, but not this one. You are required to initialize the NAS via the installation software that comes on the included CD, which is a downside if you’re an Apple or Linux user.

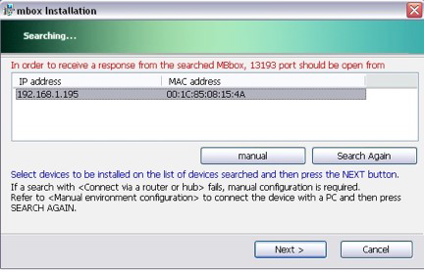

When the box boots up for the first time, it acquires an IP address via DHCP. But if you don’t have a DHCP server on your network, Mvix documents a way to set the device up using a direct-connect Ethernet cable. Figure 3 shows the initial setup screen where the Box has been located on the LAN.

Figure 3: Initial Setup

And as you go through the setup, other things start to stand out as a bit different than the typical NAS. First is the requirement to locate and enter a serial number that’s located on the bottom of the product. I rarely see a requirement to tie the physical box to the software being installed.

Then there are two long license statements that you are required to agree to before installation. One was fairly standard, but the other was for signing up for an online DNS registration type service associated with the product. That agreement had a number of restrictions with typical language (although often in broken English) about uploading and downloading illegal or copyrighted content.

But one sentence jumped out at me. Evidently by accepting the license, I agreed not to upload a “death-gui”. Now this made me a bit worried. Some of the Graphical User-Interfaces I’ve developed over the years have been pretty bad. But I don’t think any of them were overly lethal. So maybe they wouldn’t be qualified as a death-gui and I won’t be violating the license agreement. Time will tell.

Moving on through the rest of the Windows installation utility, you’ll find a number of standard setup options such as network configuration, passwords, etc. One curious problem I had was related to setting the Time Zone. During the course of preparing this review, I set it numerous times, only to find out later it was set back to Seoul time.

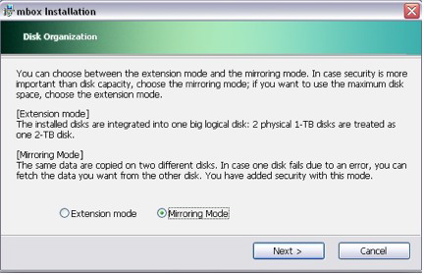

Figure 4 shows the menu where an initial RAID selection is made.

Figure 4: Initializing RAID

You can see in this case, I’ve selected RAID level 1, mirroring, which should get me the ability to recover from a single disk failure. The other supported "Extension" mode is actually RAID 0.

Feature Tour

Once the initial setup is completed under the Windows installation utility, you can move on to the web-based interface for additional configuration and maintenance. Figure 5 shows the web interface once I had logged in as an administrator.

Figure 5: Web Interface

In general, I found the interface used in the box to be attractive, with some “Web 2.0” AJAX functionality where changes can be made without a complete page reload. Although the interface looked nice, seeing titles such as “Manager Establishment Information Changing” shows that Mvix could use some help in their translation department (Mvix is a Korean company).

The picture in the upper left of this screen (no that’s not me!) was the default photo but each user can change it to whatever they want. After a while I found this default image a bit creepy so I looked closer and found that the name of the file used was “con_l_man.jpg”, a curious choice for the default administrator image!

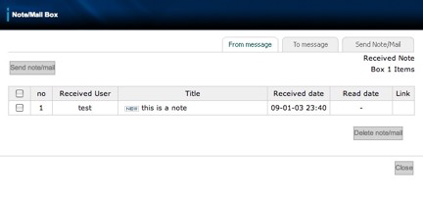

I won’t have space here to go into detail on all of the options from this menu, but I’ll touch on some of the more interesting ones. First, the web interface is designed to support both normal and administrative users, which is a little bit unusual. Typically the interfaces on NASes are just used for configuration purposes, but this box is a bit different. Mvix seems to be targeting the MvixBOX toward those who want to have a Portal with a community of users. There are options for each user to create and customize their own Blog, for example. Users can also send notes to each other (Figure 6), and they can communicate through a built-in message board.

Figure 6: Note Capabilities

When sending notes, users can even pass references to files for sharing. And speaking of sharing, the box also has Torrent and RSS capabilities, but when I tried out this feature (Figure 7), I ran into trouble.

Figure 7: Torrent and RSS

Every time I would try to start up a Torrent, the web interface would seem to lock up. I could still access shares on the box, but the web interface would be unresponsive so I had no choice but to reboot.

When a normal user is logged in, the administrative options such as user and group management (Figure 8) are grayed out.

Figure 8: Group Assignment

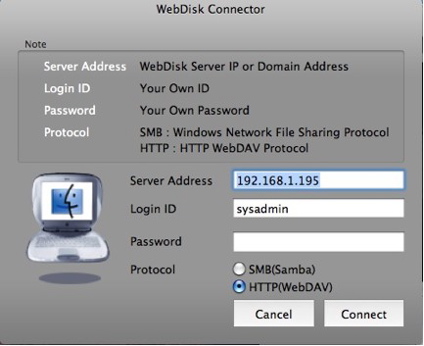

As seen in the lower-left corner of the screen back in Figure 5, both normal and administrative users get Windows and MacOS buttons that will download little utilities for connecting to the Box. Figure 9 shows the MacOS version of the utility.

Figure 9: Webdisk Connector

From this menu, you can see that choices are available for both SMB and WebDAV connections, but not AFP or NFS, which the MvixBOX also supports. This utility worked fine for me, but it wasn’t clear why a separate utility was needed, since both of these protocols, plus NFS and AFP, are supported natively in OS X’s “Finder”.

Digging into the administrative menus, you’ll find options for setting up users, groups, network parameters, quotas, time, etc. One unusual feature I noticed as I went through the menus was user specification. Any time a user needed to be specified, there was a “search” option to help find the user name or ID. This implied that the designers were expecting the administrator of the box to be managing many users, which once again shows the community-of-users focus of the product. There were also options for generating temporary “guest” users, whose accounts would be time-limited.

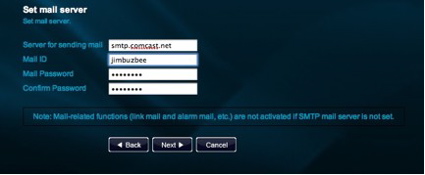

There was also an option for setting up email parameters so the Box could send out alerts and status messages (Figure 10), but it wasn’t able to use my settings.

Figure 10: Email Setup

You can see in this menu that there are no options for non-authenticated email servers, alternate ports, SSL-enabled servers, etc. I find this is common for these products. They just don’t have enough flexibility when setting up SMTP, with the result being that they are unable to send out email.

The MvixBOX does have a lot of flexibility, however, when setting up network services. Support is present for a number of different protocols such as SMB, NFS, AFP, WebDAV, FTP, print serving and even iTunes serving. And for those wanting a command line, I was happy to see that the MvixBOX even has the ability to turn on SSH.

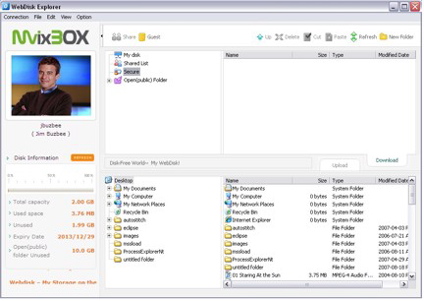

For Windows users, there’s an “Explorer” feature (requiring an Active X plug-in) that lets you treat the Box more like a local resource from your browser (Figure 11).

Figure 11: WebDisk Explorer

This seemed a bit duplicative for local users, but it might help remote users who can’t directly mount shares on the Box. There is also a Windows-only backup software capability for backing up your data to the MvixBOX, but I found no option for backing up the disks on the MvixBOX itself.

RAID Fail Test

I test all RAID-capable NASes to see how they perform when a drive "dies". In this case, I had already set the Box up for RAID 1, so I should be protected against a single disk failure. To see how it worked, I yanked out a drive while everything was up and running.

The first thing I noticed was… nothing. I heard no warning beeps. I saw no LED change. Since RAID 1 transparently handles disk failure, all of my data was available just as before. But when I logged in as an administrator, I saw no warnings or anything in the internal notes display.

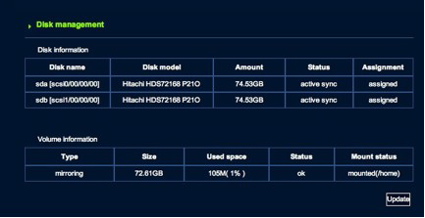

If the box had a logging feature, which it doesn’t, it might have shown me something. And since my email setup failed, I received no email alert. Even when I dug down into the disk status menus, everything was shown as normal (Figure 12).

Figure 12: Disk Status

I almost went back to the Box to see if I really did pull the drive! But when I finally hit the “Update” button shown in Figure 12, only then did I get an indication that something was wrong. It took a while, but eventually I got an error indicating that the display couldn’t be updated. It didn’t tell me that a disk had failed; it only said it couldn’t update the display. Very odd. So the only option I had was to reboot.

When the Box came back up, once again I had no indication that anything was wrong; I just had one fewer disk. To see if I could recover, I shut down, replaced the drive and booted back up. This time, the “Status” display from Figure 12 indicated that a recovery was underway, with a percentage-done display. At least that was automatic.

I let the recovery run overnight, and in the morning I logged in and still had a “percentage-done” of 2% and I thought it was stuck. But then I found that the user is responsible for updating the display by hitting the “Update” button. Just reloading the page or re-logging in doesn’t do it. The display is static until you manually hit the “Update” button. Odd.

The recovery is also quite slow. With my little 80 GB drives, after running all night, I was only 57% complete. Who knows how long it would take with a pair of 1 TB drives? Mvix has some work to do with the whole RAID-recovery process.
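For a ballpark answer to that question, the observed progress can be scaled linearly to a larger drive. This is only a sketch: "all night" is taken to mean 10 hours, which is an assumption and not a measured value, and it further assumes the rebuild rate stays constant regardless of drive size.

```python
def estimate_rebuild_hours(capacity_gb, observed_gb, observed_fraction, observed_hours):
    """Scale an observed rebuild rate linearly to a drive of capacity_gb."""
    rebuilt_gb = observed_gb * observed_fraction
    rate_gb_per_hour = rebuilt_gb / observed_hours
    return capacity_gb / rate_gb_per_hour

OVERNIGHT_HOURS = 10.0  # assumption: the elapsed time was not recorded exactly

hours_80gb = estimate_rebuild_hours(80, 80, 0.57, OVERNIGHT_HOURS)
hours_1tb = estimate_rebuild_hours(1000, 80, 0.57, OVERNIGHT_HOURS)
print(f"80 GB mirror: about {hours_80gb:.1f} hours")
print(f"1 TB mirror:  about {hours_1tb:.0f} hours ({hours_1tb / 24:.1f} days)")
```

Under those assumptions a 1 TB mirror would take on the order of nine days to rebuild, and a shorter or longer "night" shifts that estimate proportionally.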

Performance

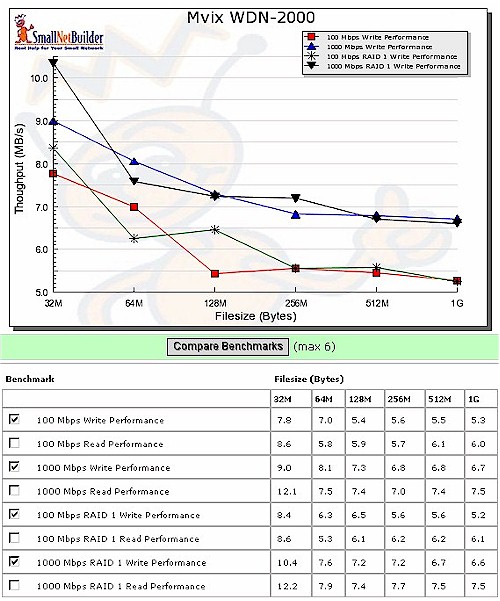

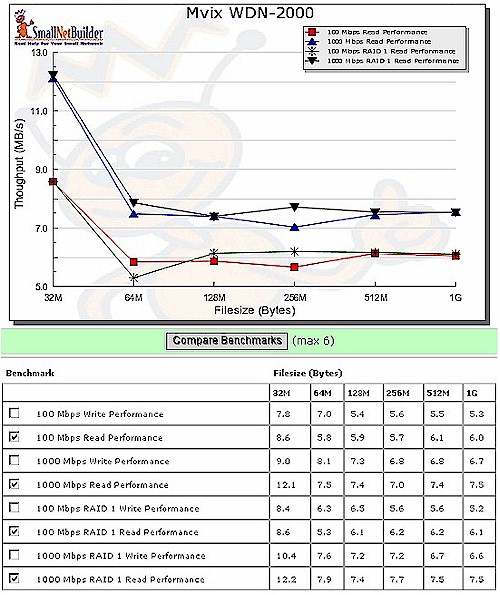

We used IOzone to test the file system performance on the Box (the full testing setup and methodology are described on this page). I tested with 1.0.0.4 firmware in RAID 0 ("Extension") and RAID 1, with 100 Mbps and 1000 Mbps LAN connections.

Figure 13 shows a comparison of the Box’s write benchmarks. It’s nice to see that performance is higher when using a Gigabit Ethernet connection and that you’re not giving up any speed by using RAID 1. But average write throughput for the large file sizes from 32 MB to 1 GB is only 7.4 MB/s, which ranks the Box at the very bottom of the 1000 Mbps Write charts when you filter for dual-drive products.

Figure 13: Write benchmark comparison

Figure 14 shows the read benchmarks compared, which are evenly matched to write performance. Average read throughput for the large file sizes from 32 MB to 1 GB is 8.2 MB/s, which, while a bit better than write, still ranks the Box at the very bottom of the 1000 Mbps Read charts when you filter for dual-drive products.

Figure 14: Read benchmark comparison
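To get a feel for what these averages mean in practice, the sketch below converts them into transfer times and compares the write figure with the raw Gigabit line rate. The 10 GB folder size is a hypothetical example, and the calculation assumes the large-file averages hold for the whole transfer.

```python
def transfer_minutes(size_gb, throughput_mb_s):
    """Minutes needed to move size_gb at a sustained rate (1 GB = 1000 MB)."""
    return size_gb * 1000 / throughput_mb_s / 60

WRITE_MB_S = 7.4  # average large-file write throughput reported above
READ_MB_S = 8.2   # average large-file read throughput reported above
SIZE_GB = 10      # hypothetical folder size, for illustration only

write_min = transfer_minutes(SIZE_GB, WRITE_MB_S)
read_min = transfer_minutes(SIZE_GB, READ_MB_S)

# 1000 Mbps is 125 MB/s before any protocol overhead.
GIGABIT_MB_S = 1000 / 8
link_utilization = WRITE_MB_S / GIGABIT_MB_S

print(f"Write {SIZE_GB} GB: about {write_min:.0f} minutes")
print(f"Read {SIZE_GB} GB:  about {read_min:.0f} minutes")
print(f"Write throughput uses about {link_utilization:.0%} of the Gigabit line rate")
```

In other words, the network link is nowhere near the bottleneck at these speeds.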

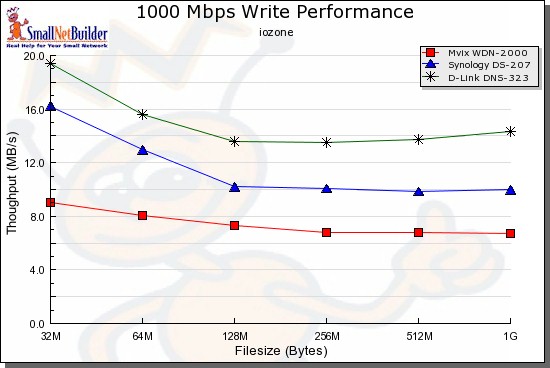

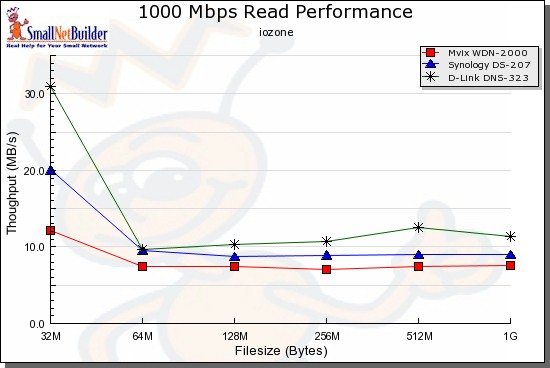

You can choose your own products to compare using the NAS Charts, but I tried out a couple of other lower-end devices to give you a head start. I chose the D-Link DNS-323 and the Synology DS207. Note that both of these other NASes support jumbo frames, which the MvixBOX doesn’t. So the following charts are without jumbo frames.

In the write test (Figure 15), you can see that the MvixBOX gets badly beaten with the D-Link DNS-323 being more than twice as fast in most cases.

Figure 15: Comparative write test – 1000 Mbps LAN

For the read comparison shown in Figure 16, the relative rankings remain the same, but performance is much more closely matched.

Figure 16: Comparative read test – 1000 Mbps LAN

Under the Covers

I couldn’t easily get into the box to take pictures of the motherboard. But Mvix documents that the processor is an ARM-based StorLink SL3516, with a Realtek RTL8211C for Ethernet, 64 MB of RAM and 8 MB of flash.

Since SSH is supported, I could easily log in to poke around a bit more. I found a 2.6.15.7-h1 Linux kernel with drivers for the Ralink RT73 family of wireless USB dongles. So keep that in mind if you want to try this feature out. I also saw that my box contained 128 MB of RAM instead of the 64 MB that Mvix documents.

I was curious about the lockup I had when I attempted to use the Torrent features, so I watched the system while I tried again, and was able to see the problem. Before I tried out the feature, I could see that there were seven instances of Apache running. But after hitting the Torrent web page, I had 52!

A quick check of the Apache error log showed the following message: “server reached MaxClients setting, consider raising the MaxClients setting”. That explains why the Box was still running, but no longer serving web requests. Evidently Mvix has a bug here that needs to be addressed.
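The process-count check described above can be scripted. The following is a minimal sketch, not what I ran on the Box: it assumes the Apache binary is named `httpd` or `apache2` (the actual name on the MvixBOX isn't documented here) and that a `ps` supporting `-A -o comm=` is available, which may not be true of a stripped-down embedded system.

```python
import subprocess

# Assumption: common names for the Apache binary; adjust for the system at hand.
APACHE_NAMES = ("httpd", "apache2")

def count_apache(ps_output):
    """Count lines of `ps` command-name output that refer to an Apache binary."""
    count = 0
    for line in ps_output.splitlines():
        fields = line.split()
        if not fields:
            continue
        # Strip any leading path so "/usr/sbin/httpd" matches "httpd".
        command = fields[-1].rsplit("/", 1)[-1]
        if command in APACHE_NAMES:
            count += 1
    return count

def running_apache_count():
    """Run `ps` on the local machine and count Apache processes."""
    result = subprocess.run(
        ["ps", "-A", "-o", "comm="],
        capture_output=True, text=True, check=True,
    )
    return count_apache(result.stdout)

# Example with captured output instead of a live system:
sample = "init\nhttpd\nhttpd\nsmbd\n/usr/sbin/apache2\n"
print(count_apache(sample))
```

Comparing the count before and after loading a page, and against the MaxClients value in the Apache configuration, shows whether the server has exhausted its worker limit.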

One advantage to having SSH access was that I could then interactively restart Apache instead of having to reboot the whole box. That’s why I really appreciate it when manufacturers supply this feature, at least for administrators.

Poking around a bit more, I found a reference to “Gluesys”, and a search of their website turned up a nearly identical product with that same “Con Man” picture, so Gluesys may be the ultimate OEM of this product.

As for other utilities in use, along with standard Linux components, the iTunes support was via mt-daapd (now called Firefly), and PHP and MySQL were being used for the Blog feature. With all of this GPL-licensed software on the product, you would expect to see source code availability as required by the GPL, but I could find no reference. Mvix’s web site had GPL downloads for a different product, but not for the MvixBOX. Hopefully this is just an oversight on Mvix’s part that will be corrected soon.

Closing Thoughts

This was an interesting little box. The “Community” focus of this device was unusual for a home-based NAS and could be appreciated by those wanting to set up message boards, Blogs, and shared resources for a group of users. But the bug I turned up with BitTorrent was a show-stopper if that’s a feature you need. Hopefully Mvix will get a fix out soon.

Mvix also has some work to do with respect to RAID recovery, but at least it did come back from the simulated failure. The performance of the product was on the (very) low end for this class of NAS, so that might be a concern if you really intend to host a lot of users simultaneously or if you want to be moving a lot of data around.

Compared to similar low-end products such as the D-Link DNS-323 or the Synology DS207, you’ll have to make some trade-offs. The D-Link is cheaper, but it lacks USB expansion capabilities and has a smaller software feature-set. And the Synology is higher-priced and has a different focus than the MvixBOX.

So as usual, it depends on what your needs are. If you’re primarily looking for high performance, keep looking. But if you want to set up a little server with a Portal geared toward a community of users, the MvixBOX just might be a good fit.