Introduction

In "What’s Missing From Your Wi-Fi 6 Router? OFDMA" back in January, I said that OFDMA was beginning to look a lot like MU-MIMO. Since then, I’ve continued to develop OFDMA test methods and try different approaches, as you’ll see shortly.

The bottom line is I still can’t consistently find evidence of the promised benefits of improved airtime efficiency, higher total bandwidth use with multiple devices and lower latency.

Testbed V2

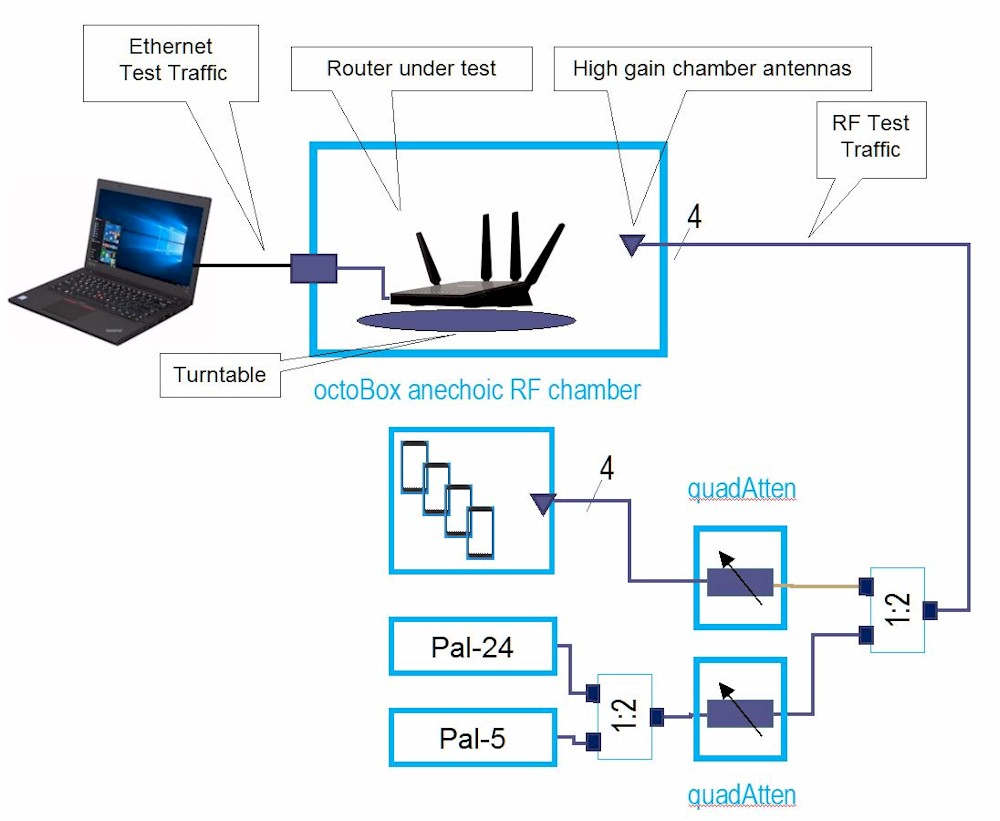

In the previous article, the testbed STAs consisted of an octoScope Box18 with four Samsung S10e smartphones inside. Four octoScope high-gain dual-band antennas capture RF and a four-channel 90 dB range programmable attenuator (quadAtten) attenuates signal level.

The quadAtten feeds into a four-channel two-way RF splitter/combiner, whose second port connects to octoScope Pal-24 and Pal-5 partner devices that have their own attenuators. This provides the option of adding 2.4 GHz b/g/n and 5 GHz a/n/ac STAs into the test mix. The splitter connects to four octoScope high-gain dual-band antennas in a 38-inch octoScope box containing the device under test (DUT).

OFDMA testbed – first attempt

The test method measured total UDP throughput for the four phones with OFDMA enabled and disabled. In the end, this approach (UDP traffic with small packet sizes, as suggested by Broadcom) didn’t produce significant or repeatable throughput gains.

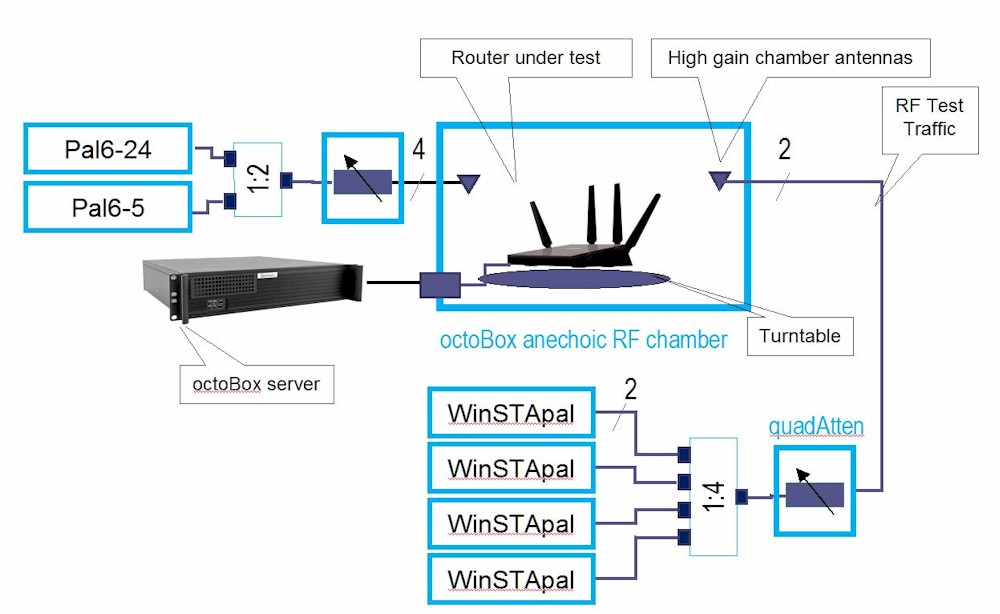

This time, I decided to focus on measuring latency, to try to verify the claim that OFDMA significantly reduces it. The new testbed uses four octoScope STApals. These are teeny ASUS pico-ITX format computers, each hosting an Intel AX200 Wi-Fi 6 station card. The STApal comes in two versions, one based on Ubuntu Linux, the other running Windows 10 Pro. The Windows STApal version was chosen because, at the time of this test, its AX200 driver had better uplink and downlink throughput than the Linux driver. (octoScope is working to correct this.)

OFDMA testbed – version 2

The testbed also has an octoScope Pal6. It can be used to add traffic load, but I use it to monitor channel congestion. More on that later.

The test is run by a Python script that uses the octoScope octoBox server platform to execute the test and report results. Traffic is generated by octoScope’s multiperf traffic generator, which uses iperf3 at its core.

The basic test approach was inspired by the Make Wi-Fi Fast Project’s RRUL (Realtime Response Under Load) bufferbloat test tools. Specifically, I used Flent‘s rtt_fair_var test ported to the octoScope test platform. All initial explorations, however, were performed using Flent natively, with a lot of help from Flent’s creator, Toke Høiland-Jørgensen. (Thanks, Toke!)

As an additional thanks to Toke, here’s a shameless plug and link to his PhD thesis "Bufferbloat and Beyond: Removing Performance Barriers in Real-World Networks". It goes into more detail on Flent and Toke’s work on "ending the wifi anomaly".

Flent uses netperf, not iperf/iperf3, as its primary traffic generator/benchmarking tool. Netperf is (relatively) easily installed on Linux systems (you need to compile it), but not on Windows. Long story short, I ended up having Windows binaries of netperf and netserver created (download a zip of both files).

The rtt_fair_var test is a variant of the Realtime Response Under Load (RRUL) test that forms the heart of Flent’s test suite. For each test STA, the test simultaneously runs a ping at a 200 ms interval to measure round-trip time (latency) and unlimited-bandwidth TCP/IP traffic using netperf to flood the connection.
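As a rough sketch of what runs for each STA, the snippet below builds one latency probe command and one load generator command. It assumes Linux iputils `ping` and a stock `netperf` client. The helper name, host address and duration are placeholders for illustration, and Flent’s real invocations carry more options than shown here.

```python
def build_rtt_under_load_commands(host, duration_s=60, ping_interval_s=0.2):
    """Return (ping_cmd, load_cmd) argument lists for one test STA.

    Hypothetical helper for illustration only; this is not Flent's code.
    """
    # One echo request every ping_interval_s seconds for the whole run.
    ping_count = int(round(duration_s / ping_interval_s))
    ping_cmd = ["ping", "-i", str(ping_interval_s), "-c", str(ping_count), host]
    # TCP_STREAM sends as fast as TCP allows, so there is no bitrate cap.
    load_cmd = ["netperf", "-H", host, "-t", "TCP_STREAM", "-l", str(duration_s)]
    return ping_cmd, load_cmd


# 192.0.2.10 is a documentation-only address standing in for the test target.
ping_cmd, load_cmd = build_rtt_under_load_commands("192.0.2.10")
print(" ".join(ping_cmd))
print(" ".join(load_cmd))
```

Both commands would be started at the same time (for example with `subprocess.Popen`) so the pings are measured while the link is saturated.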

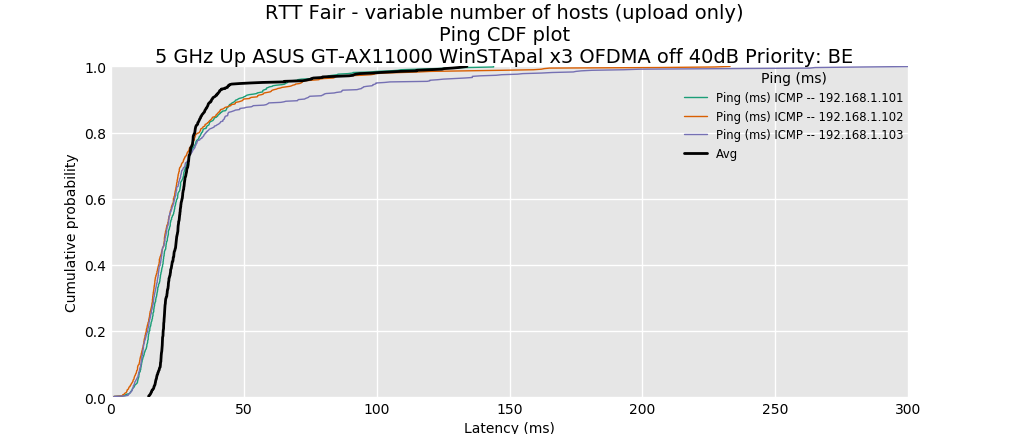

Shown below are some typical results from early tests using Flent. The DUT is an ASUS GT-AX11000, which at the time supported OFDMA for 5 GHz DL (download) traffic only. The first plot is the result with OFDMA off…

Flent RTT Fair test – CDF latency plot – BE priority – OFDMA OFF

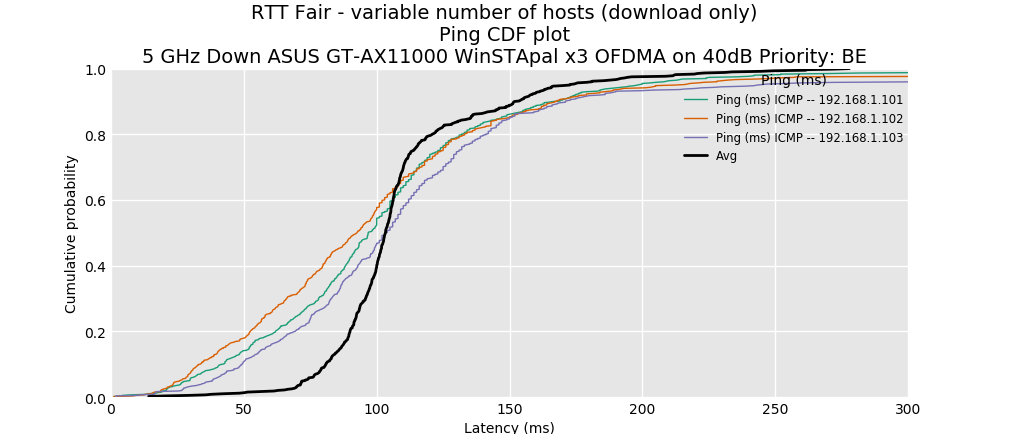

…and the second plot is OFDMA on. A CDF (cumulative distribution function) plot shows the probability that a quantity (in this case ping or RTT) will be less than or equal to a value. 80% (0.8 on the graphs) is typically used as a benchmark, so we see that with OFDMA off, average ping time for all three traffic pairs will be 30 ms or lower 80% of the time, increasing to ~120 ms or lower with OFDMA on. Not the result we’re looking for!

Flent RTT Fair test – CDF latency plot – BE priority – OFDMA ON
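For readers who want to reproduce the 80% reading from raw ping data, here is a minimal sketch of an empirical CDF. The RTT samples are made up for illustration and are not taken from the plots above.

```python
from bisect import bisect_right


def empirical_cdf(samples, value):
    """Fraction of samples that are less than or equal to value."""
    ordered = sorted(samples)
    return bisect_right(ordered, value) / len(ordered)


def value_at_probability(samples, p):
    """Smallest sample x for which the empirical CDF reaches p."""
    ordered = sorted(samples)
    n = len(ordered)
    for rank, x in enumerate(ordered, start=1):
        if rank / n >= p:
            return x
    return ordered[-1]


# Hypothetical RTT samples in milliseconds.
rtts_ms = [12, 15, 18, 20, 22, 25, 27, 30, 95, 140]
print(empirical_cdf(rtts_ms, 30))
print(value_at_probability(rtts_ms, 0.8))
```

With these samples, eight of the ten pings complete in 30 ms or less, so the 0.8 point on the CDF sits at 30 ms even though the two slowest pings are far higher.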

Got OFDMA?

While consumer router manufacturers are slowly slipping OFDMA into their products, most Wi-Fi 6 products still don’t enable OFDMA. Of those that do, only a few products support both DL and UL OFDMA on either 5 GHz or both bands.

I check most frequently with ASUS and NETGEAR for updates on OFDMA support. I’ve given up checking with TP-Link. Every time I’ve asked, the reply has been they still don’t support it on any of their Wi-Fi 6 products. D-Link is shipping only the DIR-X1560, which supports OFDMA DL+UL on 5 GHz only (2.4 GHz uses an 11n radio). Linksys has only its overpriced MX5/MX10 Velop AX Mesh system; I didn’t ask them about OFDMA support.

The last check with ASUS about a month ago showed that all its AX routers supported 5 GHz DL, with support for 5 GHz UL and 2.4 GHz DL/UL marked as "Y – open in coming weeks". But a tip from Merlin over on SNBForums led me to pick up an RT-AX58U (aka RT-AX3000) via curbside pickup from my local Best Buy. A quick check of its GUI showed it does indeed offer DL only, DL/UL and DL/UL+MU-MIMO OFDMA settings on both bands.

NETGEAR’s list showed full OFDMA support (both bands, DL+UL) on its entry-level two-stream RAX15/20 and mid-market RAX45/50 routers. Among NETGEAR’s early AX routers, the RAX80 still has no OFDMA support and its only Qualcomm-based router, the RAX120, supports DL only on both bands. For those who like buying expensive tri-band routers, at least the RAX200 has full OFDMA support. And both its Orbi and Nighthawk Wi-Fi 6 mesh systems have full OFDMA support. I bought an RAX15 and RAX45 (Costco exclusive) for testing and asked NETGEAR to send an RAX200. But due to Covid-19, they haven’t been able to ship one and I’m not flush enough with cash to part with the $488 Amazon is currently getting for it.

Best Case

While I was bringing up the equivalent of the Flent rtt_fair_var test on the octoScope testbed, an octoScope colleague shared some encouraging results he had gotten using a similar TCP/IP traffic plus ping test applied to octoScope’s Pal6 partner device, configured as an AX AP. So I decided to experiment using the Pal6 configured as a four-stream AX AP. All the following plots were produced by importing the CSV test result file into octoScope’s Expert Analysis tool.

The TCP/IP traffic settings for each of the four WinSTAPals that produced the following plots were:

- 50 Mbps bit rate (iperf3 -b)

- 256 byte buffer length (iperf3 -l)

- DSCP = 192 (iperf3 --dscp)

The DSCP parameter sets the traffic priority level. If you search for information on DSCP, you’ll find lots of different references that provide lots of different values to use as DSCP settings. For the octoScope system, a value of 192 equates to CS6 Voice priority for wireless QoS data frames. This was verified by packet inspection.
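One way to make sense of the 192 figure is to read it as the whole IP TOS byte rather than the six-bit DSCP code point: CS6 is DSCP 48, and 48 shifted left by two bits is 192. The sketch below works through that arithmetic. It assumes the common default of taking the top three bits of the TOS byte as the 802.11 user priority; the mapping actually used by the octoScope system or by any given AP may differ.

```python
# Class selector code points (six-bit DSCP values).
CLASS_SELECTORS = {"CS0": 0, "CS3": 24, "CS5": 40, "CS6": 48}

# Assumed default mapping from 802.11 user priority to WMM access category.
UP_TO_ACCESS_CATEGORY = {
    1: "Background", 2: "Background",
    0: "Best Effort", 3: "Best Effort",
    4: "Video", 5: "Video",
    6: "Voice", 7: "Voice",
}


def dscp_to_tos_byte(dscp):
    """DSCP occupies the upper six bits of the IP TOS byte."""
    return dscp << 2


def tos_byte_to_access_category(tos):
    """Use the top three bits of the TOS byte as the user priority."""
    user_priority = tos >> 5
    return UP_TO_ACCESS_CATEGORY[user_priority]


for name, dscp in CLASS_SELECTORS.items():
    tos = dscp_to_tos_byte(dscp)
    print(name, dscp, tos, tos_byte_to_access_category(tos))
```

Under that assumption, CS6 lands in the Voice category and CS5 in Video, while CS3 and CS0 both fall into Best Effort.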

Note that when OFDMA was set to off on the Pal6, MU-MIMO (MU beamforming setting) was left enabled. The Pal6 has separate downlink and uplink OFDMA enables; both were enabled for the OFDMA on condition.

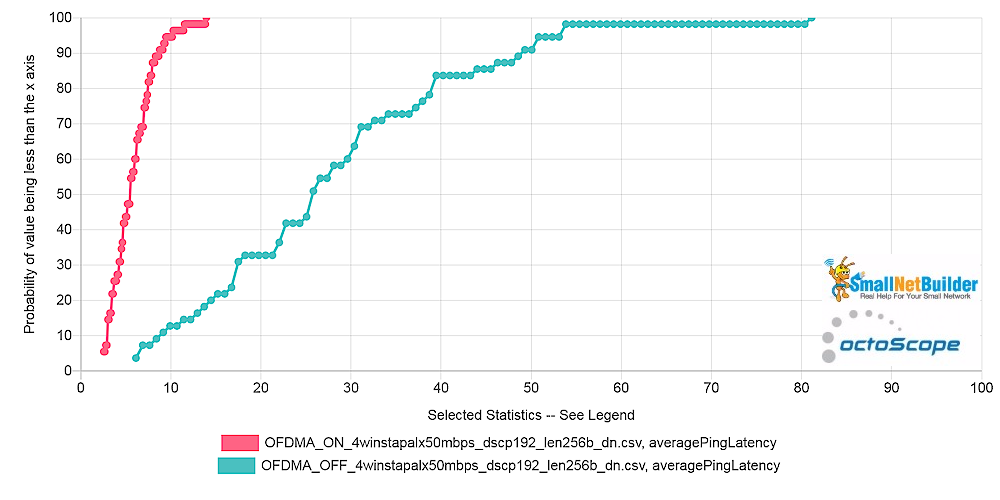

The first plot compares average OFDMA on/off latency for all four traffic pairs. There’s obviously a significant improvement: from ~48 ms to ~6 ms.

Pal6 AP – Average Latency CDF comparison – downlink
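To put the downlink latency change in relative terms, here is a small worked calculation. The 48 ms and 6 ms inputs are approximate values read from the plot, so the result is approximate too.

```python
def percent_reduction(before, after):
    """Relative drop from before to after, as a percentage of before."""
    return (before - after) / before * 100.0


# Approximate downlink latencies in ms with OFDMA off and on.
reduction = percent_reduction(48, 6)
print(reduction)
```

That works out to a reduction of roughly 87%.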

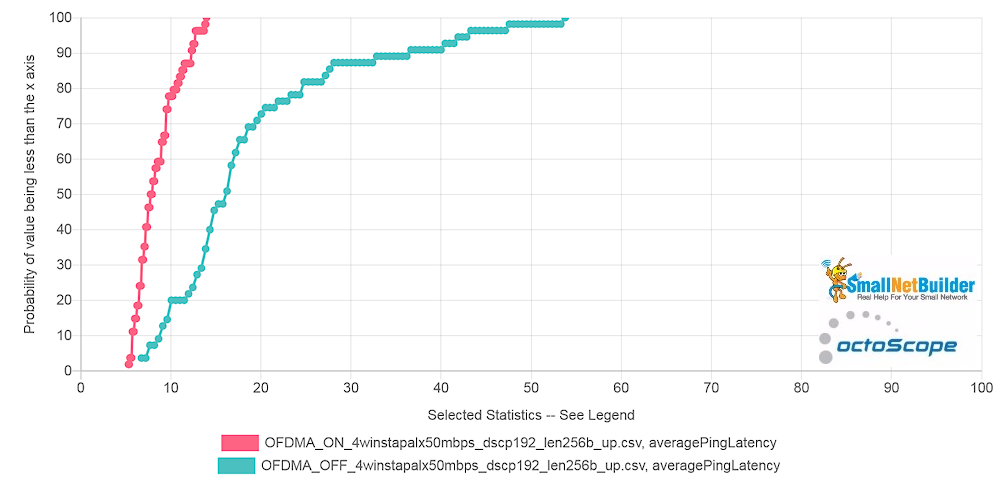

With OFDMA off, uplink latency is lower than downlink latency. With OFDMA on, uplink and downlink latency are about the same.

Pal6 AP – Average Latency CDF comparison – uplink

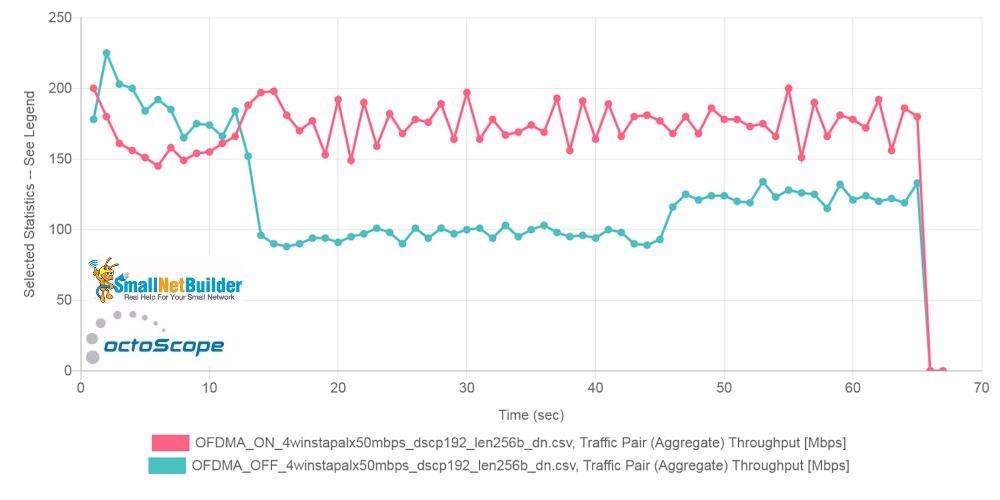

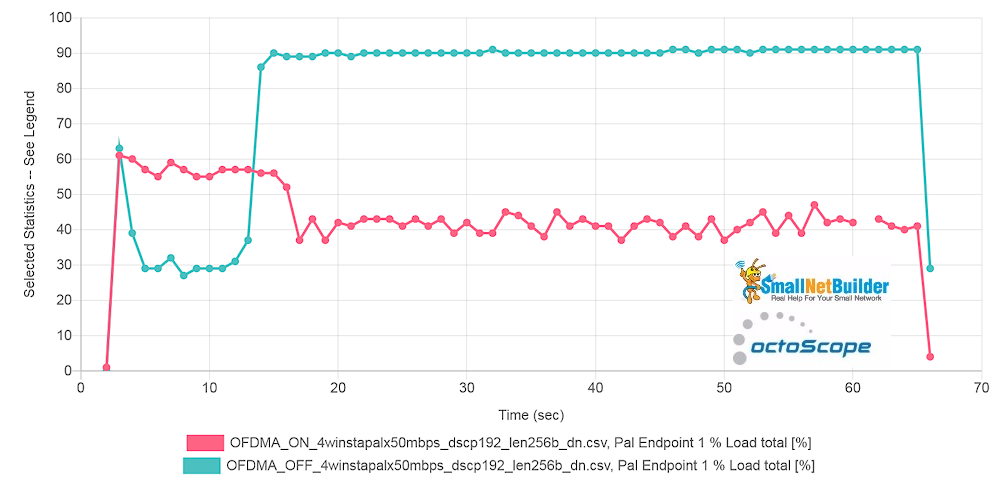

OFDMA is also claimed to increase aggregate throughput for multiple devices. The downlink throughput plot below appears to support that claim, too, but not at the start of the plot. This it-takes-time-to-settle behavior was seen during multiple runs.

Pal6 AP – Average aggregate throughput comparison – downlink

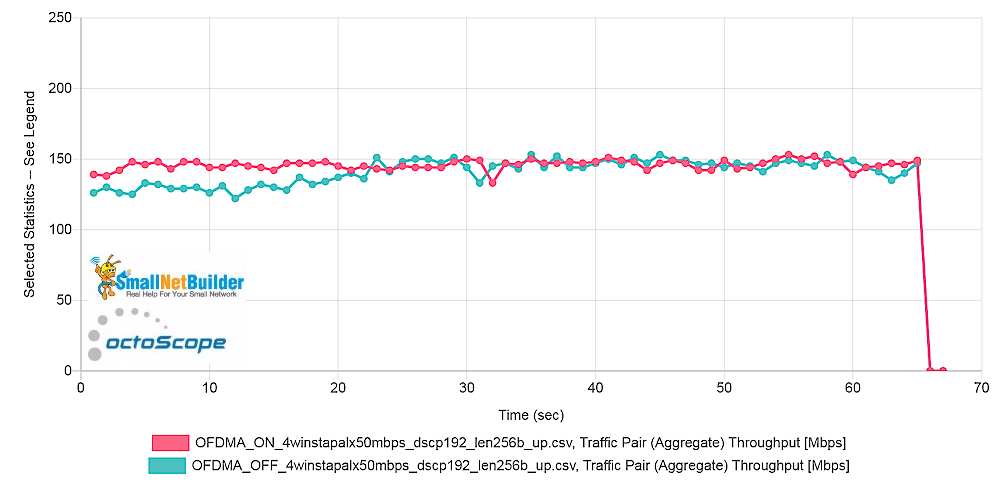

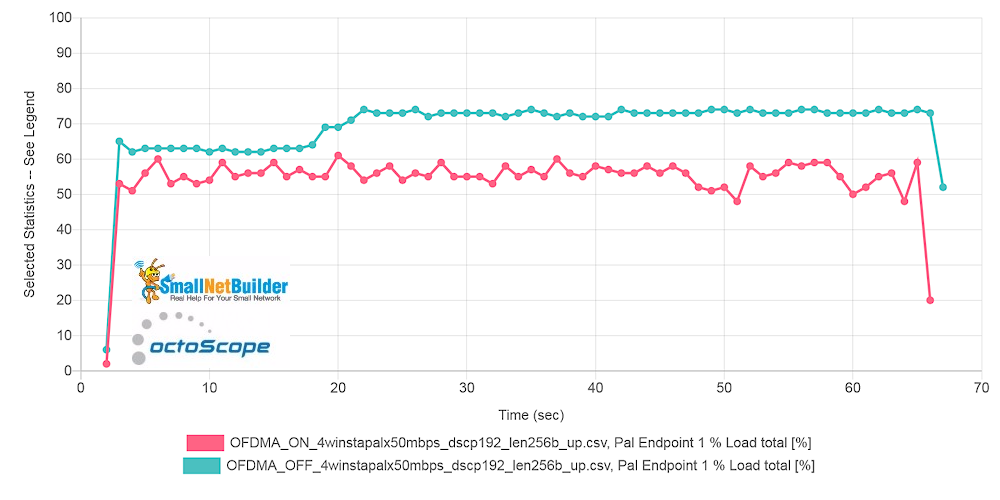

Uplink throughput shows essentially no gain from OFDMA.

Pal6 AP – Average aggregate throughput comparison – uplink

The basic tenet of 802.11ax is high efficiency; it’s in the standard’s name and is its prime directive. If AX delivers on its primary goal, airtime should be used more efficiently. Qualcomm chipsets report channel congestion and since Pal6 is based on Qualcomm’s AX "Hawkeye 2.0" chipset, we have the plot below. Congestion is reduced by over 50% with OFDMA enabled!

Pal6 AP – Channel congestion comparison – downlink

Uplink also shows lower congestion with OFDMA enabled, but the gain is not as dramatic as downlink.

Pal6 AP – Channel congestion comparison – uplink

To me, these last two plots are the most important. Because even if latency isn’t reduced and total throughput isn’t increased, reduced channel congestion means less device contention, which should result in a better Wi-Fi experience for everyone using a channel.

So it looks like we finally have a benchmark that can show significant benefit (in most cases) from OFDMA!

Unlimited Bitrate

But since the rtt_fair Flent test uses unlimited bitrate, I gave that a shot to see what would happen. All other traffic parameters stayed the same.

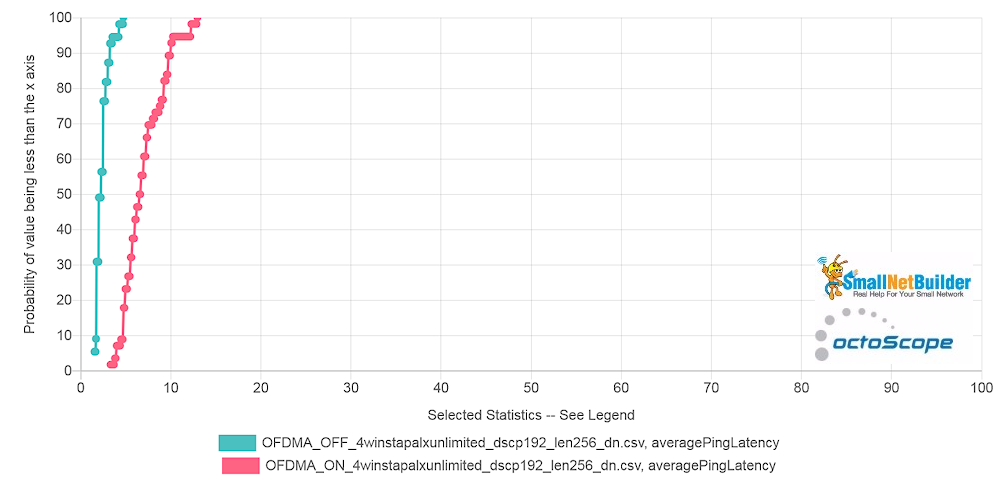

Average OFDMA on/off latency for downlink traffic isn’t very promising. While latency is lower than with 50 Mbps per STA, the change with OFDMA enabled is in the wrong direction.

Pal6 AP – Average Latency CDF comparison – unlimited throughput – downlink

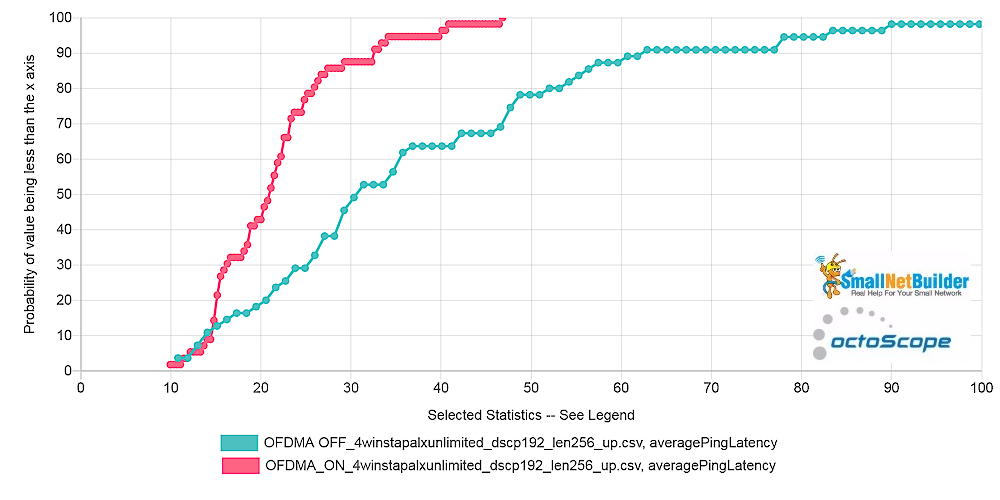

Uplink has higher latency in both cases, but it at least improves with OFDMA enabled.

Pal6 AP – Average Latency CDF comparison – unlimited throughput – uplink

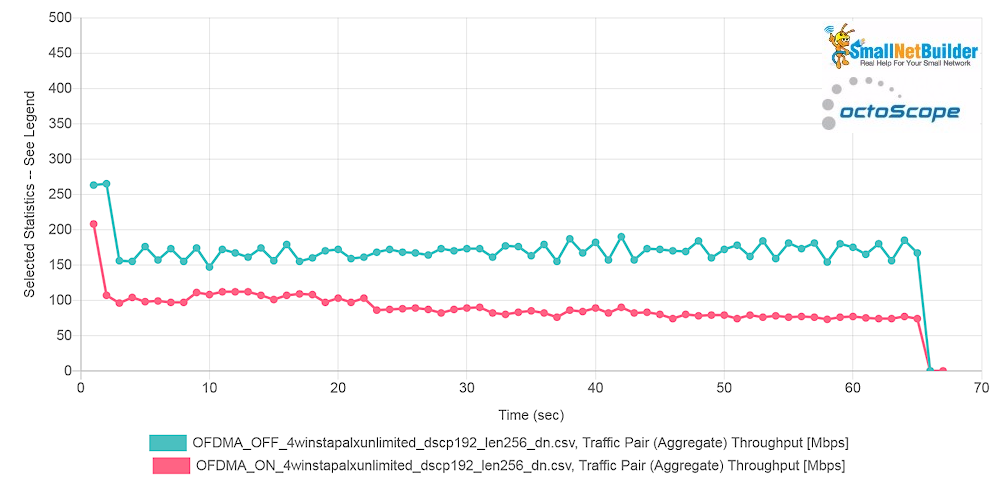

Aggregate downlink throughput also moves in the wrong direction when OFDMA is enabled.

Pal6 AP – Average aggregate throughput comparison – unlimited throughput – downlink

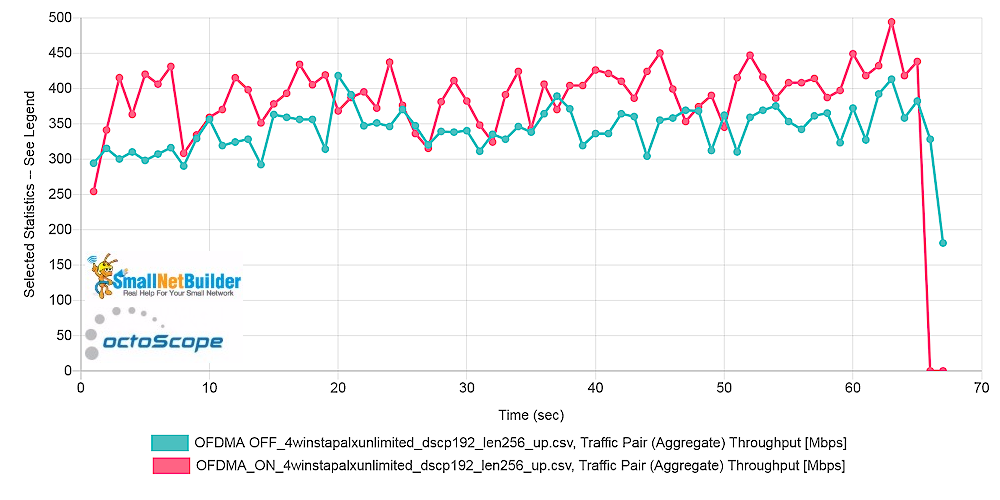

As before with limited bitrate, uplink throughput shows essentially no gain from OFDMA. But variation is much greater.

Pal6 AP – Average aggregate throughput comparison – unlimited throughput – uplink

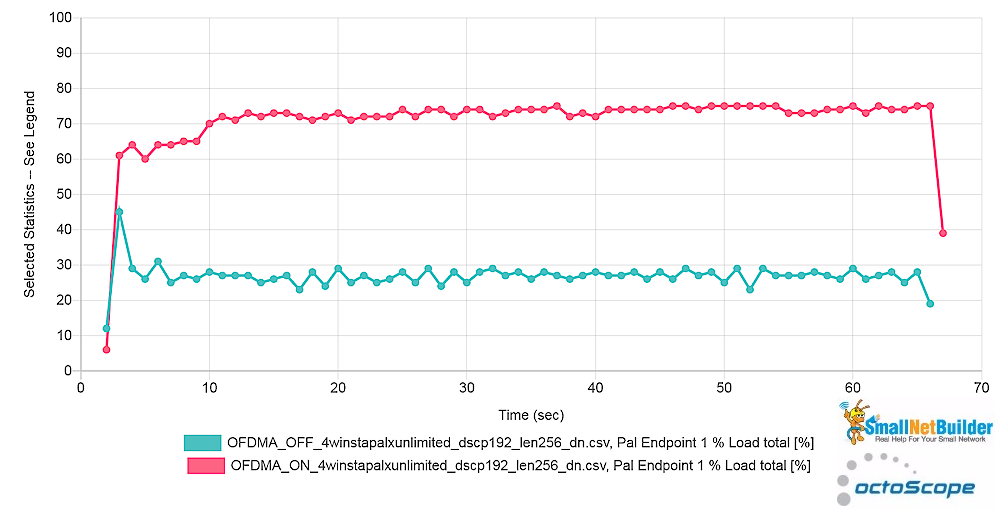

Finally, downlink channel congestion again moves in the wrong direction with OFDMA enabled, more than doubling.

Pal6 AP – Channel congestion comparison – unlimited throughput – downlink

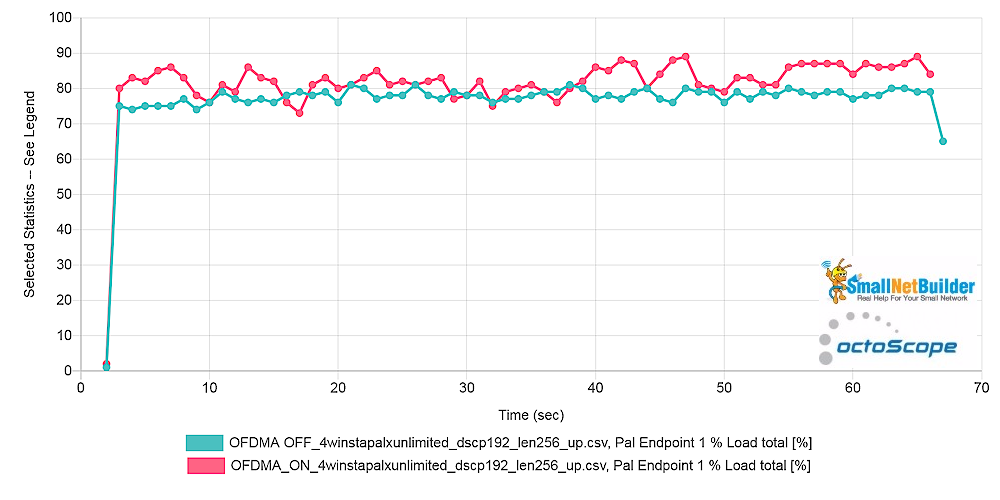

At least uplink channel congestion doesn’t increase as much as downlink does with OFDMA enabled.

Pal6 AP – Channel congestion comparison – unlimited throughput – uplink

I also ran tests setting the per STA bitrate to 5 Mbps and 100 Mbps and saw some benchmarks improve and others degrade with OFDMA enabled. So it appears that this benchmark is not as robust as I’d hoped.

Changing Priorities

Among Flent’s benchmarks are tests that use different traffic priorities, which is a good idea. Theoretically, traffic marked with voice and video should have lower latency than lower priority "best effort" traffic. But will OFDMA help or hurt traffic prioritization?

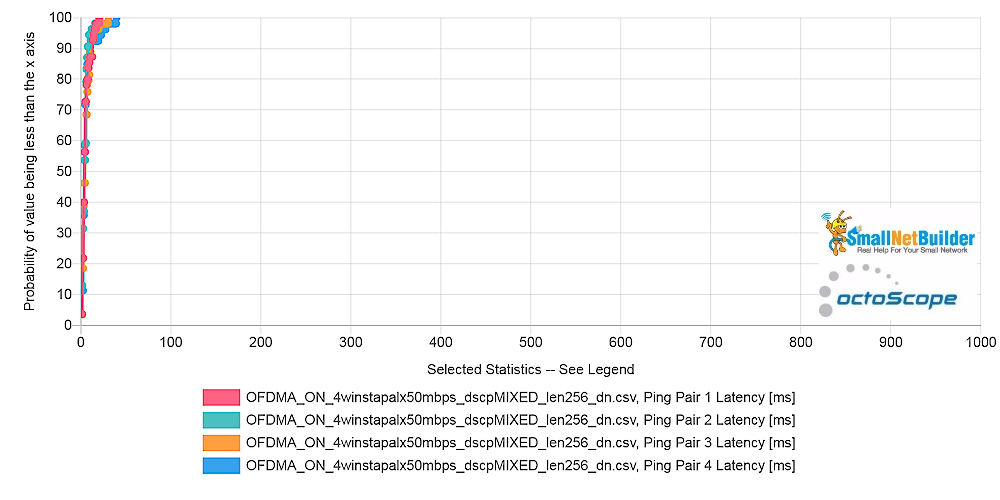

To find out, I assigned each of the four traffic pairs a different traffic priority. One STA got Best Effort CS0 (the default), one got "Excellent Effort" CS3, the third was tagged CS5 Video and the fourth got CS6 Voice.

Another thing Flent RRUL tests generally do not do is limit throughput. I already tried this earlier by setting unlimited bitrate. But setting buffer length also has the effect of capping throughput. So for the next test results, bitrate (iperf3 -b) was unlimited and buffer length (iperf3 -l) was unset.
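A back-of-the-envelope way to see why a small buffer length can cap throughput is to count how many writes per second the sender must complete to hit a target bitrate. The sketch below does that for the earlier 50 Mbps / 256 byte settings and, for comparison, a hypothetical 1460 byte buffer. It assumes each write goes out as its own packet, which TCP does not guarantee since it can coalesce small writes.

```python
def writes_per_second(bitrate_bps, buffer_bytes):
    """Writes per second needed to sustain bitrate_bps with fixed-size writes."""
    return bitrate_bps / (buffer_bytes * 8)


# Settings from the earlier limited-bitrate test.
small = writes_per_second(50_000_000, 256)
# Hypothetical comparison point, not a setting used in these tests.
large = writes_per_second(50_000_000, 1460)
print(round(small), round(large))
```

The smaller the buffer, the more per-write and per-packet overhead the sender and the air interface have to absorb for the same bitrate, so throughput can top out well below what the link would otherwise carry.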

The traffic priority key in the plots below is:

- Ping Pair 1: CS0 (Best Effort)

- Ping Pair 2: CS3 (Excellent Effort)

- Ping Pair 3: CS5 (Video)

- Ping Pair 4: CS6 (Voice)

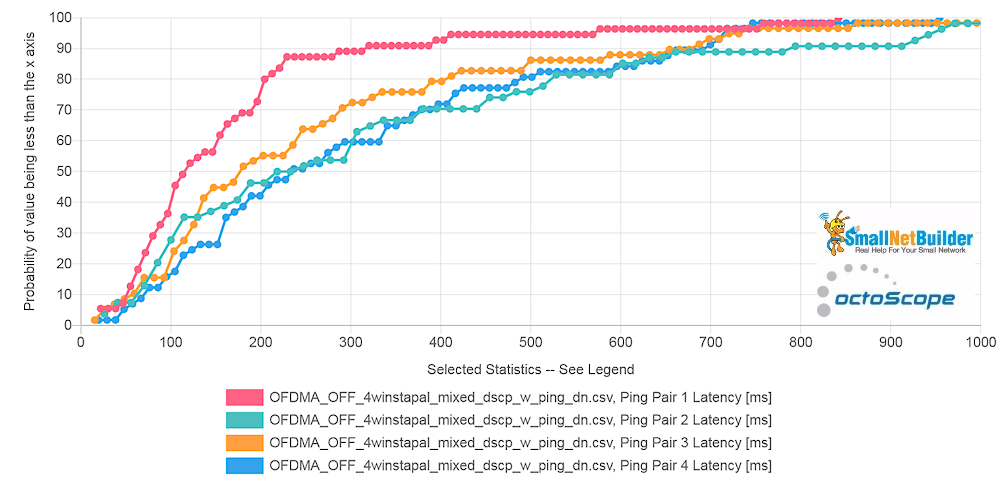

With the channel now fully loaded (congestion of ~90 to 95%), latencies increase. But the OFDMA off, downlink plot shows a spread in latencies for the different traffic priorities. Oddly, the lowest priority Best Effort STA has the lowest latency and the highest priority Voice STA has the highest.

Pal6 AP – Latency per STA – downlink – OFDMA off

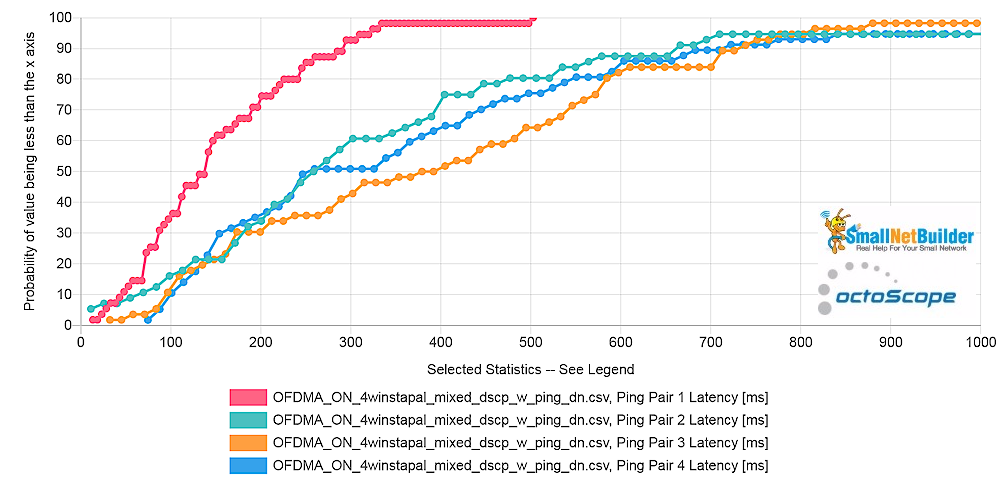

Enabling OFDMA doesn’t produce a dramatic latency reduction. Instead, all STAs move in the wrong direction and the Video STA (Ping Pair 3) overtakes the Voice STA to win the prize for worst latency.

Pal6 AP – Latency per STA – downlink – OFDMA on

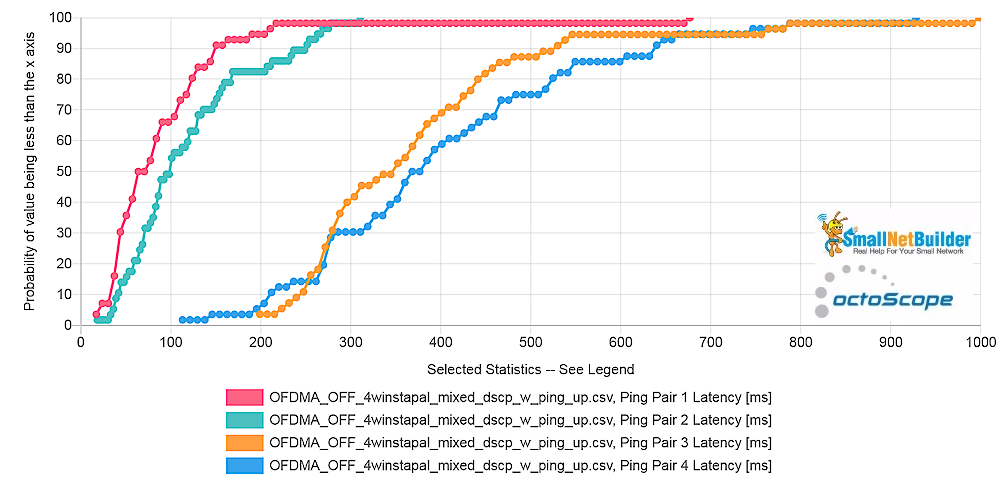

OFDMA Off uplink results are even more interesting. The two lower priority CS0 and CS3 STAs clearly have much lower latencies and lower spread in latencies (the steeper the curve, the lower the value spread) than the "higher" priority CS5 and CS6 STAs.

Pal6 AP – Latency per STA – uplink – OFDMA off

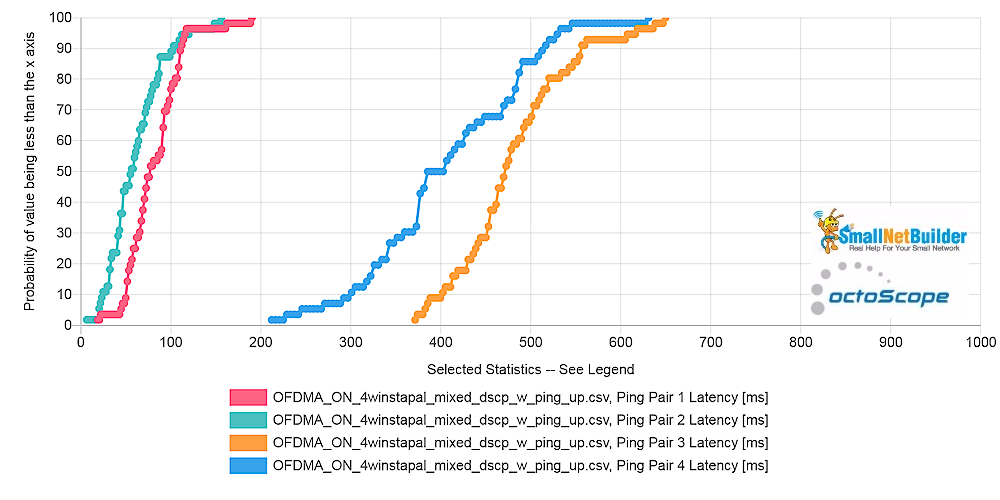

Enabling OFDMA slightly reduces latency for the lower priority STAs, but increases latency for Voice and Video.

Pal6 AP – Latency per STA – uplink – OFDMA on

For reference, here’s a downlink OFDMA off plot with a 50 Mbps bitrate and 256 byte buffer length. Channel congestion in this case averaged around 25%. Even with the horizontal scale expanded, you’d be hard-pressed to see a significant difference in latencies.

Pal6 AP – Latency per STA – downlink – OFDMA off – 50 Mbps bitrate – 256 byte buffer length

Closing Thoughts

I think you can see why I’m well on the way to putting the latest and greatest hype that the Wi-Fi industry has foisted upon us into the same bin as MU-MIMO. Like MU-MIMO, which is also a part of 802.11ax and is having some effect on these results, OFDMA is great in theory, but is proving extremely difficult to implement in practice.

I can’t say I’m surprised; airtime management is a hellaciously difficult task. And OFDMA only makes it more difficult. For each packet that hits an AP looking to be sent to a waiting STA, the AP must choose among:

- putting it right into the transmit queue

- holding it for aggregation with other packets for that STA

- holding it, with or without aggregation, to be sent with other STA traffic via MU-MIMO

- holding it, with or without aggregation, to be sent with other STA traffic via OFDMA

These decisions must be made taking into account the effects of client type mix (a/b/g/n/ac/ax), signal level, physical position, traffic type mix (voice/video/browsing/file transfer/IoT) and load. Like MU-MIMO, OFDMA is likely to take years to get to the point where it actually adds value.

In the meantime, I’m still trying to figure out what to do about OFDMA testing and will present results in Part 2. I’ve run some version of the benchmarks described here on a group of AX routers that I know have OFDMA enabled. And so far, depending on which benchmark settings are used, I’ve seen airtime congestion and latency get worse with OFDMA enabled. If anyone out there has any bright ideas, please share!