Introduction

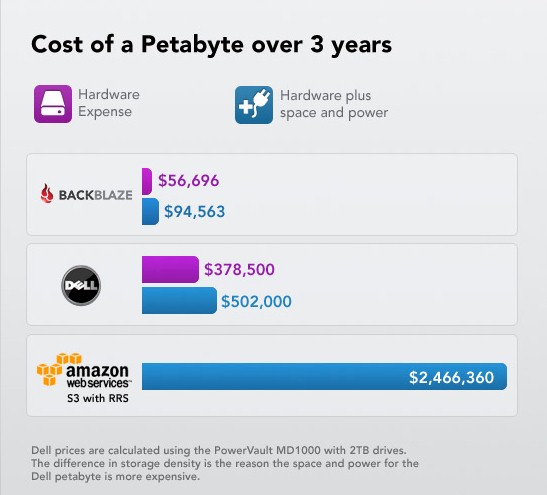

Cloud storage is a tough business with an ever-increasing list of competitors. Staying in business requires not only retaining customers, but attracting new ones and keeping costs in line.

As you might guess, storage costs play a big part. Cloud backup provider Backblaze has always believed they play a major part in its success, so much so that it designed its own storage pod, which we looked at almost two years ago.

Backblaze recently revealed its second generation design, which doubles capacity and performance while keeping cost about the same. So once again, let’s look at some key takeaways from Backblaze’s experience.

Drives

Drive densities have doubled since 2009, so a key factor in the density improvement was the change from 1.5 TB Seagate Barracuda 7200.11 drives (ST31500341AS) to 3 TB Hitachi Deskstar 5K3000 drives (HDS5C3030ALA630). The forty-five drives it takes to fill a "pod" still cost $5,400 ($120 each)!
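To put numbers on the density and cost claims, here is a minimal sketch of the arithmetic. It uses only the drive counts, sizes and price quoted above, and it assumes raw (unformatted) capacity in decimal terabytes, with no allowance for RAID or file system overhead. The variable and function names are made up for illustration.

```python
# Figures from the article; capacities are raw, decimal terabytes.
DRIVES_PER_POD = 45
OLD_DRIVE_TB = 1.5          # Seagate Barracuda 7200.11
NEW_DRIVE_TB = 3.0          # Hitachi Deskstar 5K3000
NEW_DRIVE_PRICE_USD = 120


def pod_raw_capacity_tb(drive_tb, drives=DRIVES_PER_POD):
    """Raw capacity of one pod, before RAID and file system overhead."""
    return drive_tb * drives


old_tb = pod_raw_capacity_tb(OLD_DRIVE_TB)
new_tb = pod_raw_capacity_tb(NEW_DRIVE_TB)
drive_cost = DRIVES_PER_POD * NEW_DRIVE_PRICE_USD
cost_per_tb = drive_cost / new_tb

print(f"Old pod: {old_tb} TB raw, new pod: {new_tb} TB raw")
print(f"Drives per pod: ${drive_cost}, or ${cost_per_tb:.2f} per raw TB")
```

Under these assumptions a pod goes from 67.5 TB to 135 TB raw, and the drives alone work out to $40 per raw terabyte. Usable capacity will be lower once parity and file system overhead are taken out.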

Backblaze has had a lot of experience with evaluating drives and living with the consequences of its decisions. Fortunately, they have chosen drives wisely enough that only one employee is needed to maintain old pods and add new ones. Backblaze says that only one day a week is needed to replace drives that have gone bad out of the 9,000 drives currently spinning away in its datacenter.

Infant mortality is the biggest issue, so Backblaze burns new pods in for a few days before starting to load customer data. Ten drive failures a week works out to a failure rate of roughly 5% per year across the entire drive population. The failure rate for the newer Hitachi Deskstar 5K3000 drives is less than 1%.
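The annual failure rate can be sanity-checked from the two numbers quoted above (ten failures a week, 9,000 drives). This is a rough sketch that assumes a constant weekly failure count and a fixed drive population, neither of which will hold exactly in a growing datacenter. The function name is hypothetical.

```python
WEEKS_PER_YEAR = 52


def annual_failure_rate(failures_per_week, drive_population):
    """Fraction of the drive population expected to fail in a year."""
    return failures_per_week * WEEKS_PER_YEAR / drive_population


rate = annual_failure_rate(failures_per_week=10, drive_population=9000)
print(f"Annualized failure rate: {rate:.1%}")
```

The straight multiplication lands a little under 6%, in the same ballpark as the rounded figure quoted above. The gap is most likely because "ten a week" and "9,000 drives" are themselves approximations.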

Backblaze asserts that it has yet to see drives die of old age. As a result, virtually all drive failures are in-warranty (3 years), with no-cost replacements (aside from shipping). This is part of the "secret sauce" that keeps Backblaze’s costs so low.

Pod Design

Because the number of drives didn’t change, Backblaze was able to keep the same 4U red sheet metal box used for the first generation pod. This allows them to mix old and new pods in the same racks. Due to increased storage density, it now takes only three-quarters of a single rack cabinet to store a petabyte.
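The three-quarters-of-a-rack figure can be reproduced with a short calculation. The sketch below assumes a standard 42U rack (the article does not state the rack height), raw capacity of 45 x 3 TB per pod, and a decimal petabyte of 1,000 TB. All names are illustrative.

```python
import math

POD_RAW_TB = 45 * 3      # 45 drives of 3 TB each, raw capacity
POD_HEIGHT_U = 4         # each pod is a 4U chassis
RACK_HEIGHT_U = 42       # assumption: a common full-height rack
PETABYTE_TB = 1000       # decimal petabyte

# Whole pods needed to reach one petabyte of raw capacity.
pods_needed = math.ceil(PETABYTE_TB / POD_RAW_TB)
rack_units = pods_needed * POD_HEIGHT_U
rack_fraction = rack_units / RACK_HEIGHT_U

print(f"{pods_needed} pods, {rack_units}U, {rack_fraction:.0%} of a rack")
```

With these assumptions, eight pods occupy 32U, or about 76% of the rack, which matches the three-quarters claim. A different rack height, or counting usable rather than raw capacity, would shift the result.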

Yo Ho Ho. 15 Petabytes In A Row

Other Changes

Other changes in the new pod are summarized in the table below.

| Component | Old | New |
|---|---|---|
| CPU | Intel E8600 Wolfdale @ 3.33 GHz | Intel i3 540 @ 3.06 GHz |
| Motherboard | Intel BOXDG43NB LGA 775 G43 ATX | SuperMicro MBD-X8SIL-F-B |
| SATA Cards | 2-port: Syba SD-SA2PEX-2IR PCI Express SATA II Controller Card (SiI3132) (Qty 3); 4-port: Addonics ADSA4R5 4-Port SATA II PCI Controller (SiI3124) (Qty 1) | Syba PCI Express SATA II 4 x Ports RAID Controller Card SY-PEX40008 (Qty 3) |
| RAM | Kingston KVR800D2N6K2/4G 2x2GB 240-Pin SDRAM DDR2 800 (PC2 6400) (Qty 1) | Crucial CT25672BA1339 2GB, DDR3 PC3-10600 (4x 2GB = 8GB total) |
| Boot Drive | Western Digital Caviar WD800BB 80GB 7200 RPM IDE Ultra ATA100 3.5" | Western Digital Caviar Blue WD1600AAJS 160GB 7200 RPM |
| Power Supply | Zippy PSM-5760 Power Supply (760W) | Zippy PSM-5760 Power Supply (760W) |

Although the new motherboard has two Gigabit Ethernet ports, Backblaze doesn’t use the second one. They don’t need either redundancy or a bigger pipe to the pods (remember that all the data is coming via relatively slow Internet uplinks). The additional RAM enables larger disk caches that speed up some disk I/O.

The first generation storage pod used a mix of PCIe and PCI SATA cards due to board selection and motherboard slot count. The new four-port PCIe SATA cards fit in the three motherboard PCIe slots and eliminate the old PCI bottleneck.

File System and OS

File system selection generates a lot of discussion in the SNB NAS DIY forum. Backblaze moved from 64-bit Debian 4 to Debian 5 and switched its file system from JFS to ext4. The explanation:

"We selected JFS years ago for its ability to accommodate large volumes and low CPU usage, and it worked well. However, ext4 has since matured in both reliability and performance, and we realized that with a little additional effort we could get all the benefits and live within the unfortunate 16 terabyte volume limitation of ext4.

"One of the required changes to work around ext4’s constraints was to add LVM (Logical Volume Manager) above the RAID 6 but below the file system. In our particular application (which features more writes than reads), ext4’s performance was a clear winner over ext3, JFS, and XFS."
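To see why LVM is needed between the RAID 6 layer and ext4, it helps to compare the size of a RAID 6 array with the 16 terabyte volume limit. The sketch below is only an illustration: the 15-drive array size is an assumption (the article does not say how the 45 drives are grouped), the limit is taken as 16 TiB, and the function names are made up. It computes how many logical volumes LVM would have to carve out of one array so that each stays under the limit.

```python
import math

TB = 10**12                       # decimal terabyte, as drives are sold
TIB = 2**40                       # binary tebibyte
EXT4_MAX_VOLUME_BYTES = 16 * TIB  # the 16 TB limit, taken here as 16 TiB


def raid6_usable_bytes(drive_count, drive_bytes):
    """RAID 6 gives up two drives' worth of capacity to parity."""
    if drive_count < 4:
        raise ValueError("RAID 6 needs at least four drives")
    return (drive_count - 2) * drive_bytes


def min_logical_volumes(array_bytes, max_volume_bytes=EXT4_MAX_VOLUME_BYTES):
    """Fewest logical volumes that keep each one within the size limit."""
    return math.ceil(array_bytes / max_volume_bytes)


# Assumption: 15 drives of 3 TB per RAID 6 array (three arrays per pod).
usable = raid6_usable_bytes(drive_count=15, drive_bytes=3 * TB)
volumes = min_logical_volumes(usable)

print(f"Usable per array: {usable / TB:.0f} TB")
print(f"Logical volumes needed per array: {volumes}")
```

Under these assumptions a single array offers 39 TB of usable space, more than twice what one ext4 volume can address, so LVM would need to split it into at least three logical volumes. The actual layout Backblaze uses may differ.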

Update 7/22/11 – I saw questions about ZFS in Backblaze’s post comments and wasn’t disappointed when the question was raised in this SNB Forum thread. Here’s what Backblaze has to say on ZFS:

"We are intrigued by it, as it would replace RAID & LVM as well. But native ZFS is not available on Linux and we’re not looking to switch to OpenSolaris or FreeBSD, as our current system works great for us. For someone starting from scratch, ZFS on one of these OSes might work and we would be interested to know if someone tries it. We’re more likely to switch to btrfs in the future if anything."

Final Thoughts

Most of the heavy design lifting was done for the first generation pod. So all Backblaze had to do in the second generation was avoid breaking what worked and change what needed improvement. To that end, it looks like Backblaze has achieved its goals. Same power consumption, same cost, twice the storage and twice the performance looks to me like a job well done.

As with generation 1, Backblaze offers the storage pod design free of any licensing or any future claims of ownership. So if you’re interested, hit the latest Backblaze blog post for the updated parts list, and the original post for the assembly instructions and tips.