Introduction

Updated 5/27/2008: Added Madal performance graphs

As I noted in a blog piece last month, Bill Meade’s Build a Cheap and Fast RAID 5 NAS How To has continued to be one of our most popular articles, even though it’s now almost two years old. I asked readers to tell me about RAID 5 NASes that they had built, and a few people responded in enough detail to put together this article. So here we go.

Robert’s NAS

Reader Robert responded with the most detail and provided the following description and pictures of his NAS:

There are a number of reasons for building my own NAS and not buying one off the shelf. First off, I wanted to build a system where I had the ability to customize everything to my needs and desires. Secondly, by building my own NAS I was able to keep myself open for scalability and turning the system into a multipurpose system (expansion).

I wanted a server with redundancy that would work for me and have the ability to store my large video portfolio and pictures on a system that would still be intact in case a drive died. I also wanted a secondary system that I could use to encode video to different formats or to process pictures in large batches.

The video encoding didn’t need to be super fast, so I didn’t put in a powerful CPU. My main focus was to free up my main computer for games or any other task I needed to work on.

One of the reasons that I wanted to do my own NAS was to be able to research each part individually. I purchased parts over a period of two weeks by waiting for parts to go on sale at my local electronics store. While I understand that I could have saved money by buying online, I felt that by getting things on sale with rebates, in the long run, I was able to get comparable prices.

Secondly, by purchasing from stores whose ads state that they will match any competitor’s sale price within 30 days of purchase, I was able to save a little more (and just had to keep a sharp eye out for sales). Here are the parts I ended up with:

| Component | Part | Cost |
|---|---|---|
| Case | Cooler Master 690 | $40 (sale price) |
| CPU | Athlon X2 BE-2300 | $90 (combo deal w/ mobo) |
| Motherboard | ECS GeForce6100SM-M | (see above) |
| RAM | 2GB OCZ Reaper 4-4-4-15 | $45 (sale price) |
| Power Supply | Thermaltake | $40 (sale price) |
| Ethernet | 10/100 (included in motherboard) | (included in motherboard) |
| RAID Controller | Promise Tech FastTrakTX4310 | $170 |
| Hard Drives | Seagate SATA300 500GB (x4) | 4 x $90 = $360 |
| OS HD | WD 80GB SATA300 | $43 |
| OS HD bay | Not specified | $12 |
| OS | WIN XP Pro | $0 (transferred license) |
| Total System Cost | | ~$800 |

Robert’s NAS

Case: I purchased this case because I liked the cooling and the ability to easily mount hard drives. The mounts have rubber pads that do a good job at dampening vibration. The downside is that there are only 5 bays for drives and they are closer than I would like them to be.

So I stacked drives as 2 drives, space, 2 drives, hoping this will give decent air flow. For the primary OS drive I purchased a mobile drive bay online. It was cheap but it does the job even though I don’t actually need to hot-swap it.

Power supply: The PS was on sale, and based on an online tutorial for building your own NAS, I felt that 500W would be good enough.

CPU & Mobo: I felt that these two products would serve me sufficiently for now. While the processor is not the most powerful, I felt that it did a good job of handling the processing that I needed to get done on the server. By buying them in a combo I was saving about $30, which seemed like a nice move.

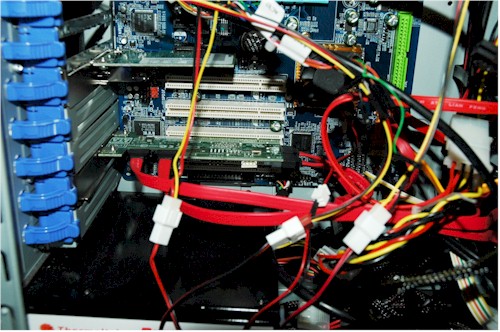

RAID: I researched RAID cards for a long time. In the end, I wanted a card that was from a decent company and supported at least 4 SATA drives. It would have been fun to buy one of the $600+ cards. But for my needs I felt that the TX4310 would do the job. I’m sure I would have saved a bit if I had purchased it online, though.

RAID Card installed

Robert’s NAS – more

HD: I have always liked Seagate and Western Digital drives, with the main advantages for the Seagate being price and 5 year warranty. In the past, I seem to have had problems having drives die on me, so I felt that having a 5 year warranty would be handy in the long run.

OS: I had an old out-of-commission computer with a copy of Windows XP Pro so I used that for my OS. I know that my system would be much more efficient if I was using a Linux distribution. But I still have not gotten around to learning the ins and outs of the OS. Hopefully, I can get onto that sometime in the near future.

The system was pretty easy to put together. I had little to no trouble installing parts, since I have put together a number of computers in the past.

After about two weeks of use, I decided that the onboard 10/100 LAN port was not doing the job so I went out and got a D-Link DGE-560T gigabit NIC. I chose it because it supports jumbo frames and because I have had good luck with Marvell. The card cost approximately $40.

Lessons Learned

After about a month of steady use the system decided to die due to the failure of the ECS motherboard. At the start, I knew that ECS made terribly cheap motherboards. I had never used one before, but everyone I knew who owned one didn’t like it and had been plagued with random restarts. In spite of the warnings, however, I took my chances and failed. Of course I was unable to return the board to the store.

So I went online and bought a Gigabyte GA-M61P-S3 board from Newegg. (This is the board you see in the pictures if you are wondering.) The new board cost me $75, bringing the total cost of my NAS to $875.
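As a quick cross-check of the running total, the quoted prices can simply be added up. The sketch below uses only figures from the parts table and the text; the dictionary keys and variable names are made up for illustration. It suggests that the $875 figure covers the original parts plus the replacement board, but not the roughly $40 gigabit NIC added after two weeks.

```python
# Prices in US dollars, copied from the parts table above.
parts = {
    "Case": 40,
    "CPU + motherboard combo": 90,
    "RAM": 45,
    "Power supply": 40,
    "RAID controller": 170,
    "Hard drives (4 x $90)": 4 * 90,
    "OS drive": 43,
    "OS drive bay": 12,
    "OS (transferred license)": 0,
}

replacement_board = 75  # Gigabyte GA-M61P-S3
gigabit_nic = 40        # D-Link DGE-560T, quoted as "approximately $40"

original_total = sum(parts.values())
total_with_new_board = original_total + replacement_board
total_with_nic = total_with_new_board + gigabit_nic

print(original_total, total_with_new_board, total_with_nic)  # 800 875 915
```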

The good news is that even with the motherboard swap, when I booted the system the RAID array came right up with no problems. I think this is due to using the Promise RAID controller instead of software-based RAID.

Since I’m not familiar with benchmarking software, I didn’t run any on the NAS. I don’t have any complaints about performance other than noticing a slight slowness in writing from a computer over the network to the NAS.

I’m really glad that I put this NAS together, as the project and experience overall was a good one and also educational. From here, I feel like this system will give me a good reason to learn some kind of Linux distribution and possibly make it double as a low level web server.

The only major frustration was when the ECS motherboard died on me. I tried to RMA the board to ECS, but never received a response and their web site at the time was poorly structured.

Madwand’s NAS

Reader Madwand had this to say about RAID 5 NAS construction:

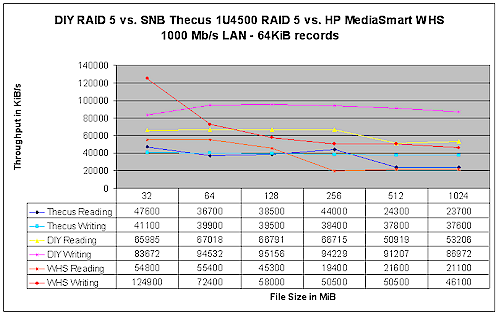

The SNB DIY RAID 5 and all the consumer NAS boxes perform much slower than what can be achieved these days, as illustrated by the sample performance chart below.

RAID 5 NAS Performance Comparison

The chart shows the performance of a simple DIY build I made using three-drive on-board RAID 5, on-board nVIDIA NIC (without jumbo frames) under Windows XP Home (OS on separate drive), which I measured using the same methodology as used by SNB.

This build was based on a now-dated Asus A8N-VM CSM with an X2 3800 configured to use a single core and 512 MB RAM.

The chart includes a couple of the higher-performing devices as benchmarked by SNB to make the following point.

Once you get into a NAS design, you quickly find that you have a ton of choices, and there is no single best choice for any of them. That is, it’s really up to you how you design your NAS.

People get hung up on "server" terminology, box cosmetics, power consumption or other factors. But the reality is that anything goes — from single-drive off-the-shelf boxes to the simple XP Home / on-board RAID and NIC that I used for the above example to ZFS and much more complex configurations. By the way, automatic sleep modes can also do wonders for power consumption these days.

Anything goes as well for pricing. Whether it’s $500, $1000 or higher depends on what you want to spend. It’s better to build the device to fit your needs and desires than to a price point, since the NAS will be a long-term central device of some importance to the household.

Madal’s NAS

The last example comes from reader Madal:

I first built a Windows XP box using the behemoth Lian-Li V2100 case (12 x internal 3.5" drives). Frankly, I have no idea why I bought it at the time! I thought it looked cool and aptly named it "The Monolith". Its purpose was archiving my 2000+ CD collection as 320 Kbps MP3s.

Madal’s “Monolith”

I bought a 320 GB WD for the job, and threw in whatever other drives I had lying about. About 4 months later, that brand new HD died, and I had not backed it up, thinking "well, it’s a new drive..." Famous last words. Four months of ripping, and 120 gigs lost. Never again. Nor will I ever buy another WD drive.

Then I set about my quest. I bought 400GB Seagates when they were sale priced @ $110 or less from Fry’s, and acquired enough drives, with spare parts, to build this:

| Component | Part | Notes |
|---|---|---|
| Case | Lian-Li V2100 | |
| CPU | AMD Athlon 64 3000+ | |
| Motherboard | Asus A8V-Deluxe | IDE x3 + SATA x4 onboard |
| RAM | 2GB | |
| Power Supply | Thermaltake 750W | |
| Ethernet | 10/100 (included in motherboard) | (included in motherboard) |
| Drive Controllers | Promise Tech | SATA x4 |
| | Silicon Image (SIIG) IDE PCI Card | IDE x2 |
| Hard Drives | Seagate 400GB Barracuda 7200.9 (IDE); Seagate 400GB Barracuda 7200.10 (SATA) | 2x IDE, 6x SATA configured in RAID 5 |
| | Seagate 300GB Barracuda 7200.9 (IDE) | 2x IDE in RAID 1 for OS |
| OS | Debian "Lenny" | |

Madal’s NAS Parts

I tried a number of OSes, including FC6 and Suse 10.1, but I could not make them work. I think the problem was that GRUB would look for the MBR on a drive other than the one the BIOS designated as the 1st HD, but I never could figure it out. I did get FC6 to format my RAID 5 array in ext3 until I could find an OS to make it all work. The only OS that let this monster fly was Debian, the "Swiss Army Knife" of Linux.

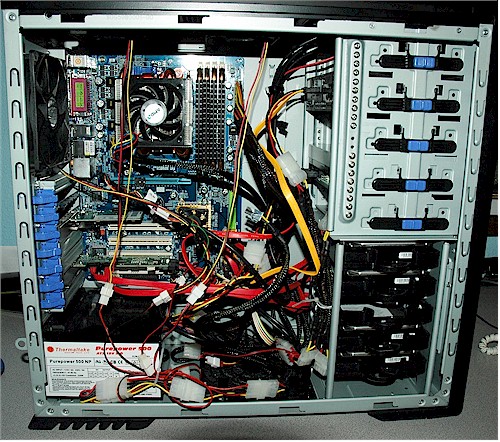

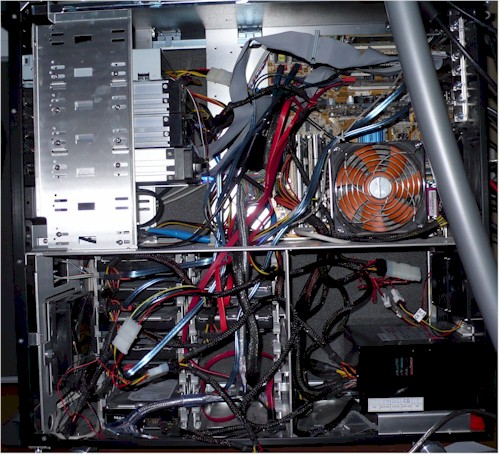

Inside Madal’s “Monolith”

I had thought about FreeNAS, but they were having problems with geom vinum volume manager and RAID 5 at that time (I think these problems have since been ironed out).

Note that I have no hardware RAID controllers operating. It’s all done through the software magic of mdadm. For whatever reason, I was never able to get GRUB to boot the RAID 1 array, so I had to use LILO.

I also had problems with a Promise Tech IDE card (PDC 20268 chipset). The kernel constantly reported latency problems. But I have had no problems since switching to a Silicon Image (SIIG) card. Also, the hard drives will run very hot (up to 50C), so I have to leave the side cover off the box.

Updated 5/27/2008

I ran iozone on "Monolith" and plotted the results along with the Synology DS508 data taken from the NAS Charts. Keep in mind that two different systems were used to take the data, so the results aren’t totally apples-to-apples.

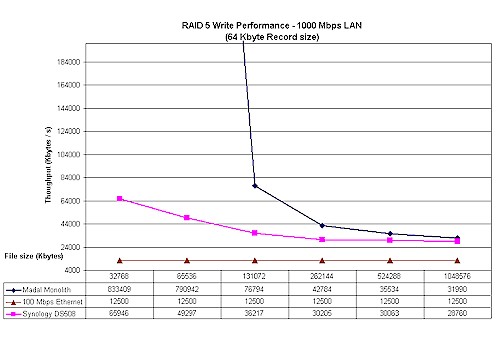

Madal RAID 5 NAS Write Performance Comparison

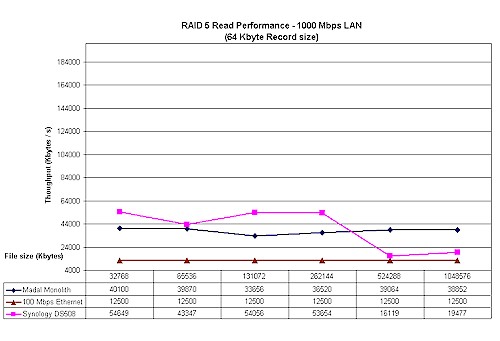

Write performance is literally off the chart for file sizes below 128 MB, due to the large amount of RAM in my NAS. Read performance isn’t that much different than the DS508’s, except where my NAS takes the lead for the 512 MB and 1 GB file sizes.

Madal RAID 5 NAS Read Performance Comparison

Lessons Learned

Given the recent price drops in pre-built NASes, I would say building your own is worth the hassle if:

1) You build for fun and enjoy the challenge, like I did. I had a lot of fun building it, and trying to get the pieces of the puzzle to fit.

2) You want more than 4 drives and/or 3TB of RAID5, given current drive sizes.

3) You have enough spare parts lying around to do it.

4) You want to learn Linux (or Sun or BSD or how to hack XP for RAID5).
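Point 2 is easier to judge with the RAID 5 capacity rule in hand: one drive's worth of space goes to parity, so usable space is (number of drives - 1) times the smallest drive. The sketch below is a minimal illustration, not anything the readers ran. It assumes 1 TB as the largest "current" drive size, which the text does not state, and it ignores filesystem overhead; the function name is hypothetical.

```python
def raid5_usable_gb(drive_sizes_gb):
    """Usable RAID 5 capacity in GB: (n - 1) x smallest member drive."""
    if len(drive_sizes_gb) < 3:
        raise ValueError("RAID 5 needs at least three drives")
    return (len(drive_sizes_gb) - 1) * min(drive_sizes_gb)

robert = raid5_usable_gb([500] * 4)        # 4 x 500 GB Seagates
madal = raid5_usable_gb([400] * 8)         # 2 IDE + 6 SATA 400 GB Seagates
four_bay_max = raid5_usable_gb([1000] * 4) # assumed 1 TB drives in a 4-bay box

print(robert, madal, four_bay_max)  # 1500 2800 3000
```

Under that assumption, a four-bay box tops out at 3 TB of RAID 5, which matches the threshold in point 2.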

I didn’t use hardware RAID because hardware RAID boards cost a fortune, even the ones I found on eBay. And the cheaper ones are usually limited to 4 drives. Given what I did, with mdadm, there is really no limit to the number of drives that can be used: just throw in another PCI SATA card + drives and mdadm --grow it.
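For readers who have not used it, growing an mdadm array usually takes more than one command. The sketch below only builds the command strings and does not run anything. The device names (/dev/md0, /dev/sdi1, /dev/sdj1) and the helper name are hypothetical, and the final step assumes an ext3 filesystem sitting directly on the array, as on Madal's box.

```python
def grow_commands(array, new_devices, current_count):
    """Build the shell commands to add drives to an md array and grow it."""
    if not new_devices:
        raise ValueError("need at least one new device")
    new_count = current_count + len(new_devices)
    commands = [
        # Add the new partitions to the array; they join as spares.
        ["mdadm", "--add", array, *new_devices],
        # Reshape the array so the spares become active members.
        ["mdadm", "--grow", array, f"--raid-devices={new_count}"],
        # Enlarge the ext3 filesystem to fill the bigger array. Run this
        # only after the reshape has finished (see /proc/mdstat).
        ["resize2fs", array],
    ]
    return [" ".join(cmd) for cmd in commands]

# Hypothetical example: an 8-drive array gaining two more drives.
for line in grow_commands("/dev/md0", ["/dev/sdi1", "/dev/sdj1"], 8):
    print(line)
```

The reshape rewrites every stripe, so it can run for many hours on an array this size.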