| At a glance | |
|---|---|
| Product | Super Flexible SW Super Flexible File Synchronizer |
| Summary | Super Flexible File Synchronizer is a front end to both Amazon S3 and Google Docs to use as cloud storage and sync options. It also supports several other Internet technologies like FTP, SSH, and WebDav. |
| Pros | • Robust feature set. • Decent wizard for setup. • Free Linux edition. • Inexpensive depending on use case. |
| Cons | • Documentation is lacking for the number of features. • Standard edition doesn’t offer any option to sync to a network server. • Website is tough to navigate. • Client GUI makes finding certain features difficult. |

Typical Price: $0


At SNB/SCB, we tend to steer away from products with the adjectives “Super” and “Flexible” in the name. Usually that means the marketing people have gone wild and just started coming up with stuff to mask an otherwise bad product.

In Super Flexible File Synchronizer’s case (SFFS for my fingers’ sake), that thankfully doesn’t apply. SFFS was a reader’s recommendation as a replacement for Crashplan, and after looking it over, it definitely has some benefits that make it a contender in the cloud storage space.

SFFS supports Windows, OS X, and Linux. The product has two versions: Standard and Pro. Standard cuts back on many features such as any Internet-based protocols for sync (SSH, FTP, WebDav, S3), compression, running as a service, Real Time Sync (folder monitoring), and several others. In short, if you’re interested in cloud backup/storage, stick with the Pro Edition.

Neither edition is expensive, but honestly the licensing structure is confusing. I’ve made a table below to illustrate the different license types and how they mesh with the different product versions. The licensing is all “seat” oriented. This means it can be installed on multiple computers, but only used by as many people as you purchase licenses for.

| License | Single OS, Standard | Single OS, Pro | Combined OS, Standard | Combined OS, Pro |
|---|---|---|---|---|
| Single License | $34.90 | $59.90 | $39.90 | $69.90 |
| Family Pack (5 computers) | $59.90 | $99.90 | $69.90 | $119.90 |
| Business License (5 computers) | $119.90 | $199.90 | $139.90 | $199.90 |
| Home Business (2 users over 5 computers) | $89.90 | $149.90 | $104.90 | $169.90 |

Just a little confusing, yeah?

An exception here is Linux. For whatever reason, SFFS Pro on Linux is free, no strings attached. This makes setting up a Linux file server with a decent backup solution rather inexpensive.

Installation, Setup, and In Use

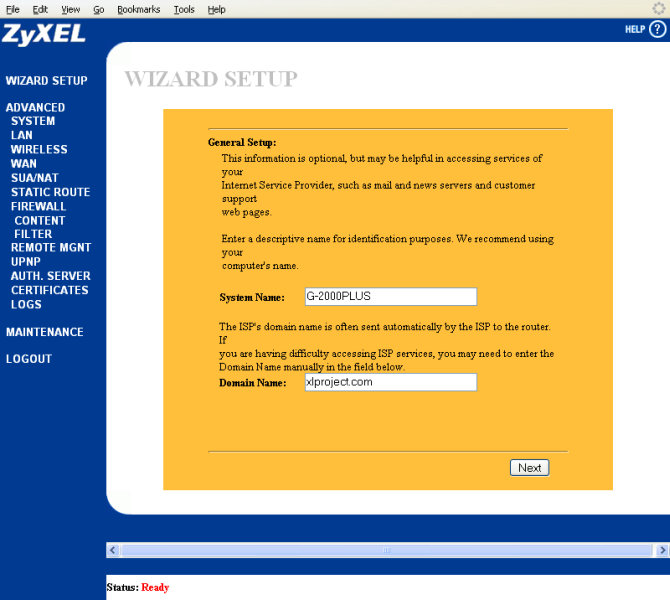

Installation is standard for whatever system you’re on. You have the option in Windows of installing just the Remote Service, which I will talk about more in a minute. Once installed, SFFS opens in a Wizard Mode, which helps walk you through setting up a new profile. You can see the wizard in the Gallery, and overall it was a good thing to have.

I decided to sync into Amazon’s S3 to test the cloud storage functionality. With the recent introduction of the 5 GB Free storage option, this could be a compelling option for people looking to back up some data using SFFS as a nice S3 front-end.

Setting up an Amazon S3 account is really simple, and involves setting up an AWS account. I’m not going to go in-depth into the process of S3 setup, but account setup is quite easy (name, credit card, address, etc.). Once that’s been verified, S3 requires a dump area called a “bucket” to be created to hold your data. You can then create a folder structure inside that bucket.

Once bucket setup is complete, you can start the wizard process in SFFS. First you need to select the folder you want synced. Multiple folders can be synced by creating multiple “profiles”, which is what is created at the end of the wizard process. After selecting the folder, you then select the folder sync destination.

The wizard that greets you when you first launch SFFS.

First step is to figure out what to sync and where.

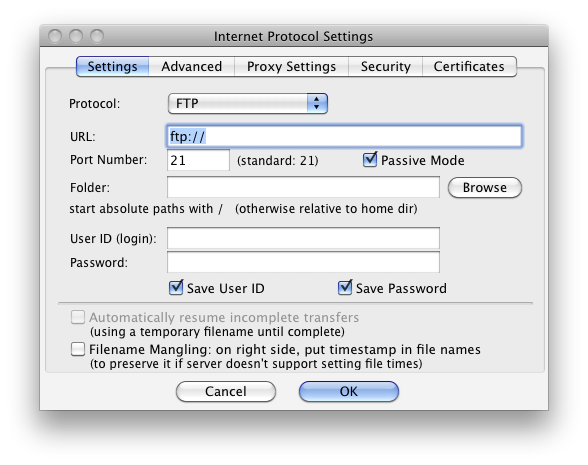

Here are the specific settings for S3.

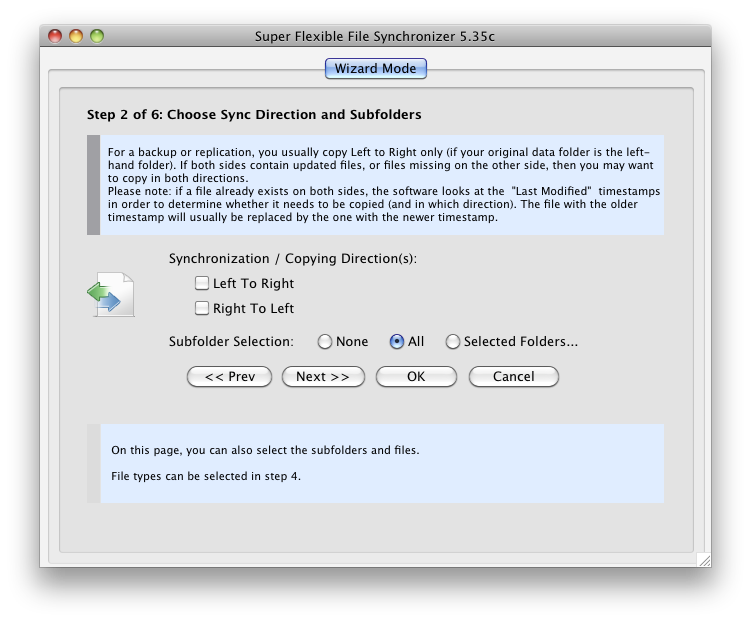

Second step is to determine sync direction. This is important for zipping / unzipping.

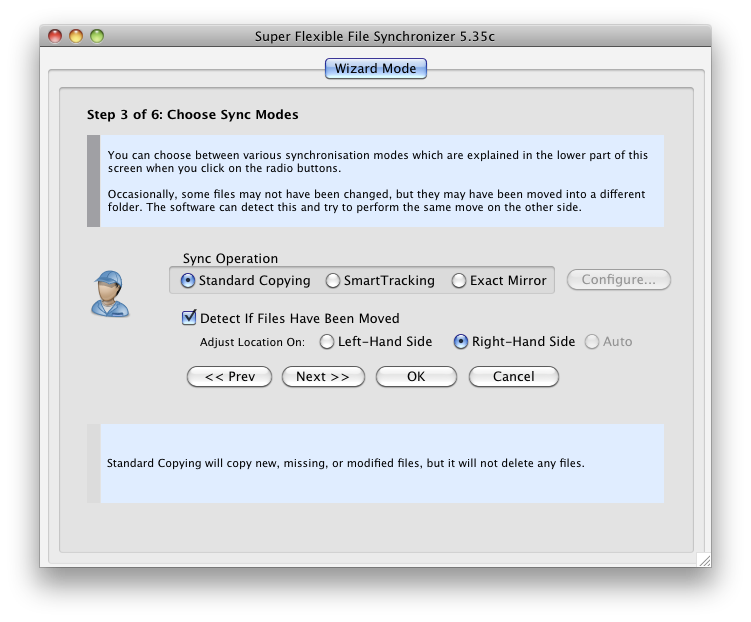

Step 3 determines the sync mode.

Step 4 sets up file inclusions and exclusions by type.

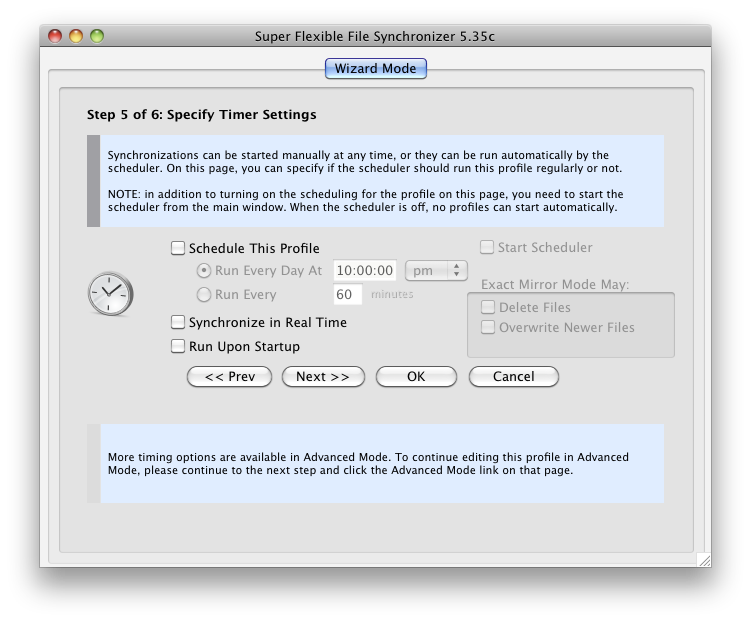

Step 5 is where you set the schedule.

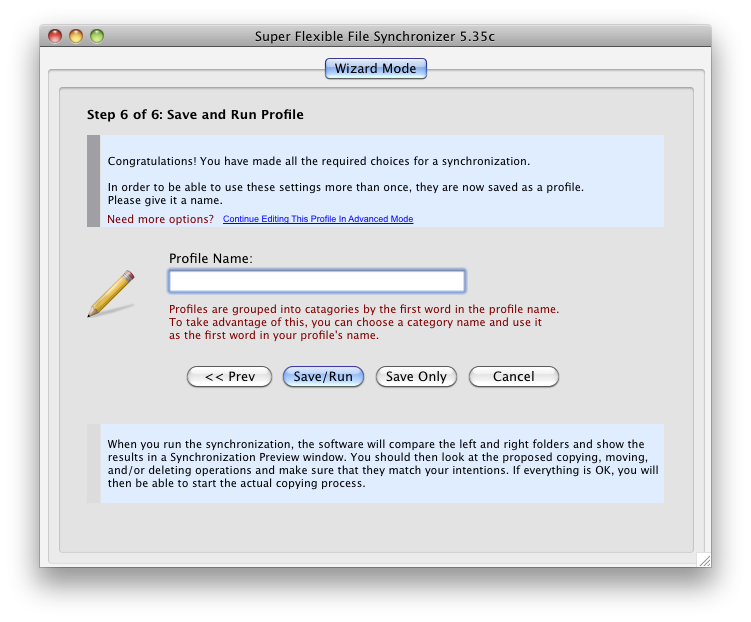

Step 6 saves the profile and runs it if you want.

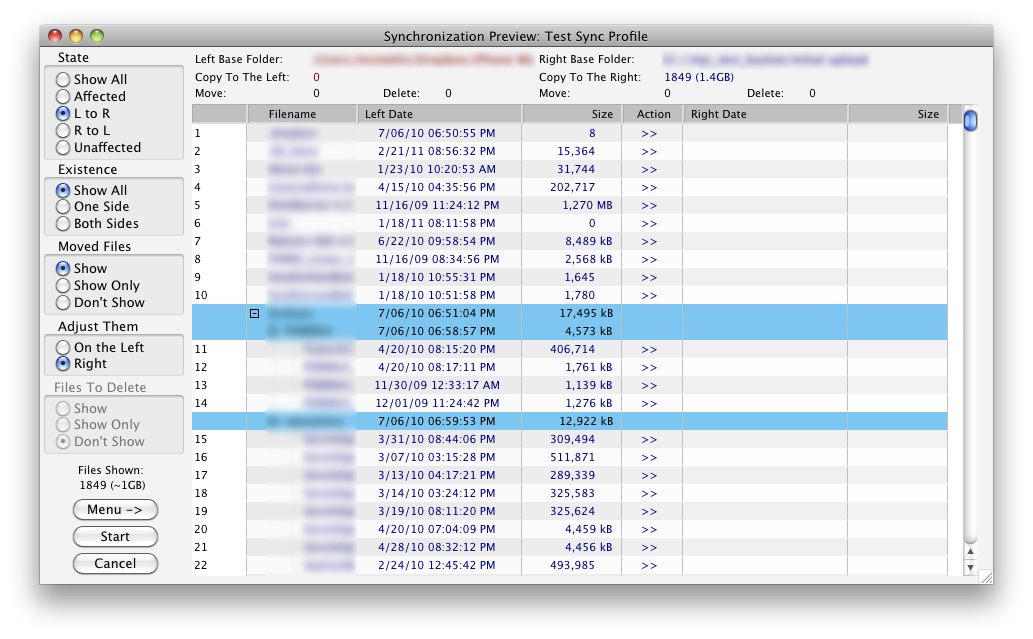

A view of what a sync preview looks like.

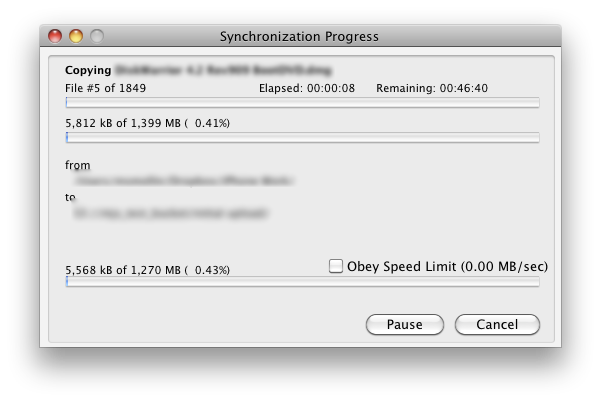

Sync processing

What advanced view looks like.

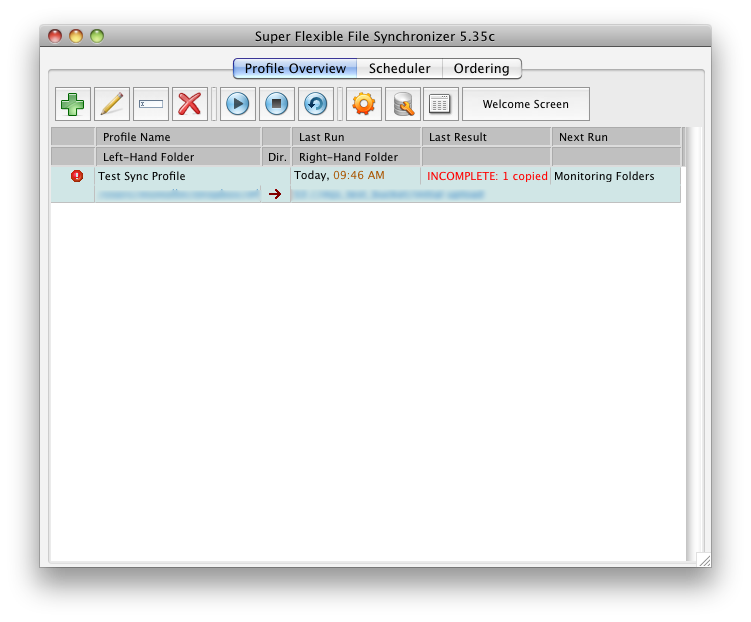

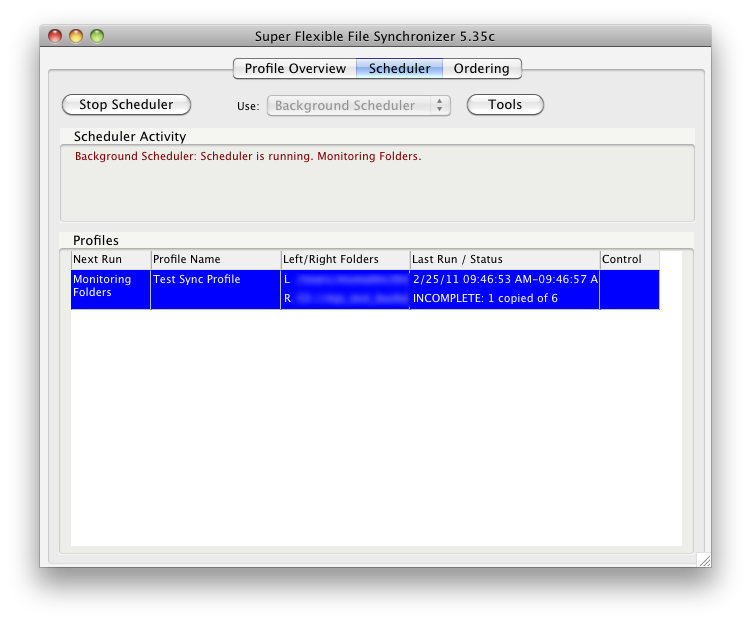

The scheduler for different profiles

Since we’re using S3, you will need your access key / secret key combo, which is automatically generated by AWS, or you can have it generate a new one for you. Once that’s entered into SFFS, you can connect and select the remote destination.

You can also choose reduced redundancy here, which is significantly cheaper but not replicated among as many data centers. Understand that reduced redundancy is still designed for 99.99% durability and 99.99% availability, whereas regular S3 is designed to provide 99.999999999% durability and 99.99% availability of objects (aka files).

For backups, reduced redundancy is probably best as you will usually still have local copies if the rare case happens that Amazon loses a file. You can read the full explanation of S3’s durability here.
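To put those durability percentages in perspective, here is a rough back-of-the-envelope sketch. It assumes the figures are annual, per-object durability numbers and that losses are independent; the object count is a made-up example, not something from my testing.

```python
# Expected object losses per year for a given annual durability figure.
# The durability values are the ones quoted above; OBJECTS is hypothetical.

def expected_annual_losses(num_objects: int, durability: float) -> float:
    """Average number of objects lost per year at the given annual durability."""
    return num_objects * (1.0 - durability)


OBJECTS = 10_000  # hypothetical size of a backup set

reduced = expected_annual_losses(OBJECTS, 0.9999)
standard = expected_annual_losses(OBJECTS, 0.99999999999)

print(f"Reduced redundancy: about {reduced:.2f} objects lost per year")
print(f"Standard S3:        about {standard:.1e} objects lost per year")
```

In other words, with 10,000 files you would expect to lose roughly one a year on reduced redundancy, which is tolerable when the local copy still exists.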

Next you choose the sync direction. “Left to Right” roughly equates to Source to Destination. In our case, that’s computer to S3. This is important if you later decide to set up ZIP compression or encryption. By choosing “Right to Left” or both directions, you will be able to decrypt / unzip files that are synced in. Obviously ZIP files can be decompressed using any number of utilities, but SFFS makes it easy by automating the process.

Next you choose a sync mode. Standard copying is like it sounds: it copies files but won’t mirror deletions from the source. SmartTracking adds some logic around standard copying, where SFFS will try to figure out what happened and update each side accordingly. SmartTracking is required if you decide to do a 2-way sync between computers, because it lets you define a set of rules for handling deletions. Finally, Exact Mirror will mirror file changes regardless of what the change is.
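The difference between standard copying and an exact mirror is easiest to see with a toy model. The sketch below is only an illustration of the two behaviors as described above, not how SFFS is implemented: folders are modeled as dictionaries mapping a file name to a version label, and SmartTracking is left out because its rules are user-defined.

```python
# Toy model: a "folder" is a dict of file name -> version label.
# These functions are hypothetical illustrations, not SFFS internals.

def standard_copy(source: dict, dest: dict) -> dict:
    """Copy new and changed files; never delete anything from the destination."""
    result = dict(dest)
    result.update(source)
    return result


def exact_mirror(source: dict, dest: dict) -> dict:
    """Make the destination identical to the source, deletions included."""
    return dict(source)


source = {"report.doc": "v2", "notes.txt": "v1"}
dest = {"report.doc": "v1", "old.pdf": "v1"}  # old.pdf was deleted at the source

print(standard_copy(source, dest))  # old.pdf survives on the destination
print(exact_mirror(source, dest))   # old.pdf is removed to match the source
```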

Step 4 of the wizard is to set up wild cards if you want to back up only certain file types, or exclude certain file types. This is useful if you are backing up a heavily used folder and only want PDF and DOC files backed up from it. It can also prevent accidental file changes like moving a large movie file into a folder that gets synced.
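For readers who haven’t used wildcard masks before, here is a minimal sketch of how include and exclude patterns typically combine. It uses Python’s standard `fnmatch` module; the function name, the case-insensitive matching, and the rule that exclusions win over inclusions are my assumptions for illustration, not documented SFFS behavior.

```python
from fnmatch import fnmatchcase


def should_sync(filename: str, include: list, exclude: list) -> bool:
    """Return True if the file matches an include mask and no exclude mask.

    Matching is done on the lowercased name, so masks should be lowercase.
    """
    name = filename.lower()
    if any(fnmatchcase(name, mask) for mask in exclude):
        return False  # assumption: exclusions take priority
    return any(fnmatchcase(name, mask) for mask in include)


include_masks = ["*.pdf", "*.doc"]
exclude_masks = ["*.mov", "*.tmp"]

for candidate in ["Budget.PDF", "letter.doc", "holiday.mov", "scratch.tmp"]:
    print(candidate, should_sync(candidate, include_masks, exclude_masks))
```

With these masks, only the PDF and DOC files pass, and the large movie file dropped into the folder by accident is skipped.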

Step 5 determines the schedule to sync. You can choose to have it run only at a certain time of the day, run on a periodic schedule, or have it continuously sync changes to a folder. I chose continuous sync since that’s most like other cloud storage solutions. However, if you are using it for backup, setting a daily schedule might make the most sense.

Finally you save the profile, and depending on if you wanted the job to run scheduled, the scheduler starts and will execute the job. The first time a job executes, you get a preview of what the job will be syncing.

I found that SFFS was a little slower than Dropbox at syncing files into S3. When backing up the same folder set, Dropbox maxed out my connection and was steady at 24 Mbps, whereas SFFS couldn’t maintain that throughput. I assume this is because Dropbox’s rsync-based backend is more efficient at streaming files than SFFS, but it’s a minor quibble.

SFFS can do so much that I can’t cover the entire feature set, but I will touch on some of the finer features. The file monitoring works well, and synced up files nearly instantly. ZIP compression is very configurable and can be set to zip individual files or larger collections (which helps streamline uploading).

The compression and encryption settings have a number of options, with the default being “Zip Compatible AES-256”. I would recommend just leaving that, as the other settings are either less secure or less useful.
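To illustrate the difference between zipping individual files and zipping a larger collection, here is a small sketch using Python’s standard `zipfile` module. The function names and file names are hypothetical, and the standard library cannot write AES-encrypted archives, so this covers only the compression side of what SFFS does.

```python
import tempfile
import zipfile
from pathlib import Path


def zip_individually(files, out_dir):
    """Create one .zip archive per source file and return the archive paths."""
    out_dir = Path(out_dir)
    archives = []
    for src in map(Path, files):
        target = out_dir / (src.name + ".zip")
        with zipfile.ZipFile(target, "w", compression=zipfile.ZIP_DEFLATED) as zf:
            zf.write(src, arcname=src.name)
        archives.append(target)
    return archives


def zip_collection(files, archive_path):
    """Bundle all source files into a single .zip archive and return its path."""
    archive_path = Path(archive_path)
    with zipfile.ZipFile(archive_path, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for src in map(Path, files):
            zf.write(src, arcname=src.name)
    return archive_path


# Demo with throwaway files in a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    tmp = Path(tmp)
    sources = []
    for name in ("a.txt", "b.txt"):
        path = tmp / name
        path.write_text("example data\n" * 100)
        sources.append(path)

    per_file = zip_individually(sources, tmp)
    bundle = zip_collection(sources, tmp / "bundle.zip")

    print([p.name for p in per_file])
    with zipfile.ZipFile(bundle) as zf:
        print(zf.namelist())
```

One archive per file keeps restores simple, while a single bundle means fewer, larger uploads, which is the streamlining benefit mentioned above.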

The schedule can be extremely granular. If you want to run jobs at different times on different days of the week, it can be done. Just make sure not to twist yourself into a management pretzel with the scheduler options.

The Remote File Service is a Windows-only feature that starts a service on the remote machine which will handle generating a file list and maintaining file locations and deletions. This is nice when you just want to have a server that houses all your synced folders but doesn’t need a full copy of SFFS. As such, it won’t count against your licenses. This remote file service works mostly with FTP/FTPS, but can also work with SSH with some configuration.

Access, Support, and Security


Accessing your files is dependent on your remote storage. In my case of Amazon S3, I have access via S3’s interface, or any S3 client. Also, S3 offers an AWS Import/Export service where you ship them a hard drive, and they will dump files onto it for you, or upload files on it to a designated bucket. This way you can move files quickly into the system, and the service is rather inexpensive at $80 per device, and $2.49 per hour the data is loading. It also saves on all the transfer costs.
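As a quick worked example of the Import/Export pricing quoted above, the sketch below adds the per-device fee to the hourly loading charge. The ten-hour loading time is a made-up figure for illustration, and the calculation ignores shipping and any other fees.

```python
# Rates as quoted above; the loading time used in the example is hypothetical.
DEVICE_FEE = 80.00            # dollars per device
LOADING_RATE_PER_HOUR = 2.49  # dollars per hour of data loading


def import_export_cost(devices: int, loading_hours: float) -> float:
    """Estimate the Import/Export charge in dollars, excluding shipping."""
    total = devices * DEVICE_FEE + loading_hours * LOADING_RATE_PER_HOUR
    return round(total, 2)


# One drive that takes ten hours to load: 80.00 + 10 * 2.49
print(f"${import_export_cost(1, 10):.2f}")
```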

Support is through email or a forum community. The developer is extremely active on the forums, more active than I honestly have ever seen a developer. It’s really good to see, and he is very open to making changes / additions to the software based on user requests if possible. Phone support is also available, but the developer is open in saying it is not any faster than emailing or posting in the forums.

Security is in the hands of the user. You have to enable AES encryption, which is something I would probably put in the wizard. There are so many options in SFFS that it can be difficult to find what you are looking for.

Overall, SFFS is a great utility for storing files in the cloud. It’s a little daunting to navigate, and could do with a UI/UX makeover. But the features work as intended, and the free edition for Linux means anyone could set up a nice Linux server and have it handle off-site backups quite easily.

Whether or not this is a decent replacement for Crashplan is dependent partially on knowledge, time, and patience. SFFS can do everything that Crashplan can when Amazon S3 is used. SFFS takes much longer to set up and understand, though.

However, the overall costs associated with SFFS + S3 are much lower than Crashplan’s current Pro solution, and will be what I recommend to my client if the promised “Crashplan for SMB” product doesn’t deliver satisfactorily.