Introduction

In Part 1, I provided some background on WMM and showed the client and AP controls that enable it. This time, I’ll show evidence that WMM can provide traffic prioritization, based on IxChariot tests. The not-so-simple story that I alluded to regarding Step 3 of the WMM Checklist (The Source Application supports WMM) will have to wait for Part 3. I’m doing some fact-checking and it is taking some time for all of the fact-checkers to get back to me.

Testing for WMM

As usual, I turned to IxChariot to see if I could find evidence of WMM in action. My Ixia contact provided a special QoS Template definition file that contained 802.1d QoS templates that I needed to set the correct Service Quality in the WMM IxChariot test files. This file was installed in the IxChariot Program Files directory and required an IxChariot restart.

The folks at the Wi-Fi Alliance were also helpful in providing an excerpt from the WMM Test Plan that gave me some important clues on setting up Windows and IxChariot for proper QoS tagging.

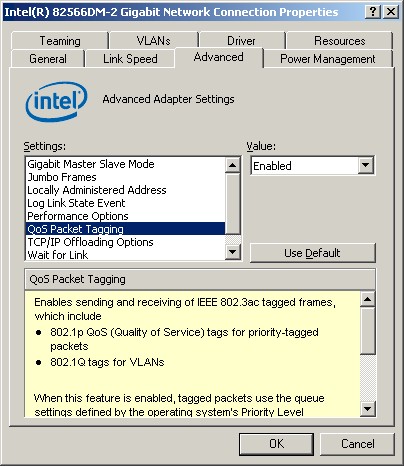

I first had to enable QoS Packet Tagging for the Ethernet NIC in the IxChariot console machine (Figure 1) so that the tagged frames that IxChariot created actually got sent.

Figure 1: Enabling QoS Packet Tagging

Even with the hints from the Wi-Fi Alliance document and my Ixia contact, I had to do a lot of experimenting to find the right combination of settings in the test notebooks, IxChariot console machine and three copies of Windows. The WFA document also contained numerous Windows Registry changes that I had to sort through.

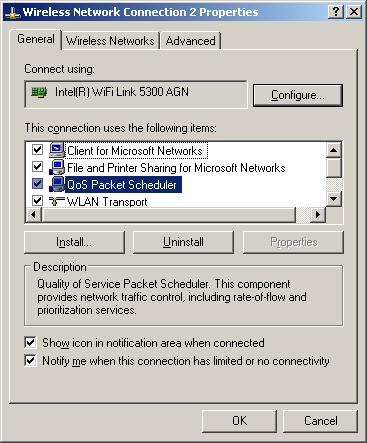

In the end, only one change was essential for enabling WMM handling in Windows XP. I had to create a new DWORD value named DisableUserTOSSetting, set to 0, under HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services\Tcpip\Parameters and reboot each machine. I also had to re-enable the QoS Packet Scheduler on all network adapters used in the testing.

Figure 2: QoS Packet Scheduler enabled
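If you need to make the same registry change on several machines, one way is to generate a small .reg file and import it on each. The Python below is only a sketch of that idea: the helper name `build_reg_file` is my own invention for illustration, and the key path and value name are the ones described above. Review the output before importing it, and remember that a reboot is still needed.

```python
# Sketch: build the text of a .reg file that sets a single DWORD value.
# build_reg_file is a hypothetical helper, not part of any Windows tool.

REG_HEADER = "Windows Registry Editor Version 5.00"
TCPIP_PARAMS = r"HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services\Tcpip\Parameters"


def build_reg_file(key_path, value_name, dword):
    """Return .reg file text that sets value_name to a 32-bit DWORD under key_path."""
    if not 0 <= dword <= 0xFFFFFFFF:
        raise ValueError("DWORD must fit in 32 bits")
    lines = [
        REG_HEADER,
        "",
        f"[{key_path}]",
        f'"{value_name}"=dword:{dword:08x}',  # .reg files write DWORDs as 8 hex digits
        "",
    ]
    return "\r\n".join(lines)


if __name__ == "__main__":
    # Print the file contents for the setting used in this article.
    print(build_reg_file(TCPIP_PARAMS, "DisableUserTOSSetting", 0))
```

Saving the printed text as a file with a .reg extension and double-clicking it on each test machine should add the value, assuming you have administrator rights.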

QoS Packet Scheduler is usually enabled by default on all network adapters. But since I’m paranoid and don’t want any unknown processes futzing with my performance measurements, I had disabled it on all my machines. Note that Vista uses a very different set of QoS mechanisms, as described in this very helpful Microsoft TechNet article.

The test setup was simple. The IxChariot console was run on an XP SP3 system connected via Ethernet to a D-Link DIR-655 router, which, as previously noted, is WMM Certified. Two wireless clients with WMM-certified adapters were used as the test endpoints. One was a Fujitsu P7120 Notebook with an Intel PRO/Wireless 2915ABG running Windows XP SP2 and the other a Dell Mini 12 with an Intel WiFi Link 5300 AGN substituted for the factory adapter and running Windows XP SP3.

Once I got Windows correctly configured, I started experimenting with IxChariot scripts. This was a bit tricky since I was dealing with two unknowns, i.e. the IxChariot script settings and products with unverified WMM support. But with lots of IxChariot runs and help from my Ixia and Wi-Fi Alliance WMM gurus, I was able to come up with a working script.

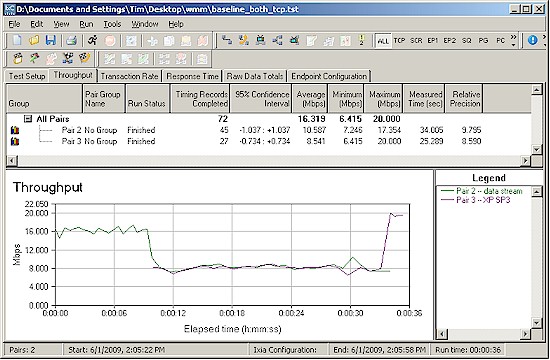

First, however, I did a baseline run with two TCP/IP streams running to the two wireless clients. The results shown in Figure 3 were as expected, with both clients sharing the 16 Mbps or so of wireless bandwidth.

Figure 3: Baseline run

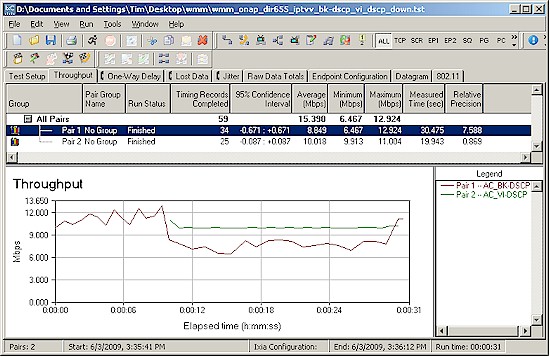

I then set up an IxChariot test using two IPTVv scripts, which simulate Cisco IPTV MPEG Video streams. The only script parameter defaults changed were the send data rate and file size. Both scripts used the RTP protocol and the Service Quality for one stream was set to use the AC_BK-DSCP QoS Template, which is "background" or lowest priority, as you might remember from Table 1 in Part 1.

The other script’s Service Quality was set to AC_VI-DSCP, which is the WMM "Video" priority. This is higher than the "Background" priority, but below the highest "Voice" priority. I used these two Service Qualities because they were used in the example plot in the WMM white paper and shown in Figure 1 in Part 1. I set the send file size to 1,000,000 bytes to smooth out the plots and the send rate to 15 Mbps for the BK stream and 10 Mbps for the VI stream.
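To make the tagging a bit more concrete, here is a rough Python sketch of how a DSCP value on a packet can end up in a WMM access category. The 802.1D priority to access category table is the standard WMM one. The DSCP step is an assumption: it takes the top three bits of the DSCP as the user priority, which is a common default but not the only mapping in use. I also don't know the exact DSCP values inside Ixia's AC_BK-DSCP and AC_VI-DSCP templates, so the CS1 (8) and CS5 (40) values in the usage note are illustrative only, and the function names are hypothetical.

```python
import socket

# WMM access category for each 802.1D user priority (0-7).
UP_TO_AC = {
    1: "AC_BK", 2: "AC_BK",   # Background
    0: "AC_BE", 3: "AC_BE",   # Best Effort
    4: "AC_VI", 5: "AC_VI",   # Video
    6: "AC_VO", 7: "AC_VO",   # Voice
}


def dscp_to_user_priority(dscp):
    """ASSUMPTION: user priority = top three bits of the 6-bit DSCP."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP is a 6-bit value (0-63)")
    return dscp >> 3


def dscp_to_access_category(dscp):
    """Map a DSCP value to a WMM access category under the assumption above."""
    return UP_TO_AC[dscp_to_user_priority(dscp)]


def tos_byte(dscp):
    """DSCP occupies the upper six bits of the IP TOS / Traffic Class byte."""
    return dscp << 2


def open_tagged_udp_socket(dscp):
    """Open a UDP socket whose outgoing packets ask for the given DSCP.

    Whether the OS honors the request depends on its QoS settings.
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, tos_byte(dscp))
    return sock
```

Under these assumptions, `dscp_to_access_category(8)` gives `"AC_BK"` and `dscp_to_access_category(40)` gives `"AC_VI"`, which matches the two priorities used in this test.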

When I ran this test with WMM enabled in the DIR-655, I was rewarded with the plot shown in Figure 4, which shows the DIR-655 and the two Intel-based clients successfully supporting WMM with both streams running downlink.

Figure 4: Successful DIR-655 WMM test

The "background" tagged stream starts first and properly falls back to allow the "Video" stream its full 10 Mbps bandwidth when it starts running 10 seconds into the script. You can also see the "background" stream throughput rise again at the end of the run, once the "Video" stream has stopped.
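The shape of that plot is roughly what you would expect from a simple "serve the higher priority first" model. The Python below is a toy illustration only. Real WMM is statistical rather than strict priority, and the 16 Mbps capacity is borrowed from the TCP/IP baseline run, so it may not match what RTP streams can actually get. The `allocate` function and the priority numbers are my own, made up for the example.

```python
def allocate(capacity_mbps, offered):
    """Toy strict-priority split of link capacity.

    offered: list of (name, priority, rate_mbps); a larger priority is served first.
    Returns a dict of name -> granted rate in Mbps.
    """
    remaining = capacity_mbps
    granted = {}
    for name, _priority, rate in sorted(offered, key=lambda s: s[1], reverse=True):
        share = min(rate, remaining)  # never grant more than is left on the link
        granted[name] = share
        remaining -= share
    return granted


CAPACITY = 16                 # ASSUMPTION: roughly the baseline TCP/IP total
BK = ("BK", 1, 15)            # background stream, 15 Mbps offered
VI = ("VI", 5, 10)            # video stream, 10 Mbps offered

if __name__ == "__main__":
    print("BK alone:   ", allocate(CAPACITY, [BK]))
    print("BK and VI:  ", allocate(CAPACITY, [BK, VI]))
    print("VI finished:", allocate(CAPACITY, [BK]))
```

In this model the video stream keeps its full 10 Mbps while both are running and the background stream is squeezed into whatever is left, then recovers once the video stream ends.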

So this means that WMM can actually provide its advertised benefits of giving properly-tagged real-time traffic priority over less critical traffic. In Part 3, I’ll have more test results to show what I found that works and what doesn’t. And I hope to have some definitive answers on whether you can actually find media servers that support WMM tagging.